

Discussion RDNA 5 / UDNA (CDNA Next) speculation

Page 106 - Seeking answers? Join the AnandTech community: where nearly half-a-million members share solutions and discuss the latest tech.

luro

Member

> If, say, the additional cost of bringing AT0 to market for gamers is $200 million, AMD would need to sell 100,000 AT0s at $2000 to make up the costs. Would it really be impossible to make those numbers?
>
> There are like a hundred million PC gamers worldwide. Many of them can easily sell their previous Nvidia flagship and buy the new AMD flagship for zero cost. There are at least 100,000 wealthy AMD fanboys who would buy it even if AT0 is dogsh1t.
>
> There is no economic or logical reason not to bring out a consumer gaming version of AT0. It's not being made because the market is a cartel. You will have a real market once the Chinese enter it.

Eh, they would need $200M in net income to make up the costs, not in sales. So the actual number of units needs to be much higher.

But it's not part of AMD's strategy to sell at break-even.
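The difference between sales and net income is easiest to see as a break-even calculation: a fixed cost is recovered by the margin on each unit, not by its price. A minimal sketch; the $1200 per-card cost in the second call is a made-up figure for illustration, not something from the thread (and AMD's own revenue per card would be below retail anyway, since board partners and retailers take a cut):

```python
import math

def break_even_units(fixed_cost: float, price: float, unit_cost: float) -> int:
    """Units that must be sold before the per-unit margin covers a one-off fixed cost."""
    margin = price - unit_cost  # contribution of each card sold
    if margin <= 0:
        raise ValueError("price must exceed unit cost to ever break even")
    return math.ceil(fixed_cost / margin)

FIXED = 200e6  # the $200 million figure from the quoted post

# The quoted estimate implicitly treats every sales dollar as profit:
print(break_even_units(FIXED, 2000, 0))     # 100000
# With a hypothetical $1200 all-in cost per card (not from the thread):
print(break_even_units(FIXED, 2000, 1200))  # 250000
```

Any non-zero unit cost pushes the break-even volume above the 100,000 figure, which is the point being made about net income.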

Win2012R2

Golden Member

> Apple is the market leader and they've fruitlessly tried to make their fatty iGP configs relevant for a while now.

They haven't got x86, so that cuts them off from 99% of the "desktop" gaming market that buys GPUs.

adroc_thurston

Diamond Member

> They haven't got x86, so that cuts them off from 99% of the "desktop" gaming market that buys GPUs.

We ain't talking desktops here, since Intel does not exist in discrete graphics.

Win2012R2

Golden Member

> We ain't talking desktops here, since Intel does not exist in discrete graphics.

Apple is a completely separate market that lives by its own rules. It cracks me up watching people compare their CPUs vs x86; it's literally Apples and oranges.

adroc_thurston

Diamond Member

> Apple is a completely separate market that lives by its own rules

They sell computers.

> It cracks me up watching people compare their CPUs vs x86; it's literally Apples and oranges.

They sell PCs? With much faster CPUs.

It's very plain and simple, they're the gold standard of client CPU perf/bl/yaddayadda.

Win2012R2

Golden Member

> They sell computers.

Rolex sells watches.

Ferrari sells cars.

Swarovski sells diamonds.

etc.

> They sell PCs? With much faster CPUs

They sell walled-garden devices that look like a PC, but this ain't a Personal Computer: Macs have 10% "PC" market share; it's a niche even for Apple.

btw I am not an Apple hater - I use iPhone/iPad, but won't use Macs even if they were free.

adroc_thurston

Diamond Member

> Rolex sells watches.
>
> Ferrari sells cars.
>
> Swarovski sells diamonds.

Apple sells PCs.

> They sell walled-garden devices that look like a PC, but this ain't a Personal Computer: Macs have 10% "PC" market share; it's a niche even for Apple.

Mac share is growing and it's a PC.

They're very good hardware. Shame about macOS.

Win2012R2

Golden Member

> Mac share is growing and it's a PC.

It's a very niche (10%) x86-incompatible device in a laptop form factor that has minuscule market share, and lots of well-established software can't run on it. It's mostly bought as a status symbol, which is what allows Apple to charge extra-extra premium pricing.

It's not a PC, just like my iPad isn't, even if I add a keyboard to it. Effectively, current Macs are iPads that run a more serious OS with a slightly bigger software choice.

So Macs are iPads with a built-in keyboard.

adroc_thurston

Diamond Member

> It's a very niche (10%) x86-incompatible device in a laptop form factor that has minuscule market share, and lots of well-established software can't run on it. It's mostly bought as a status symbol, which is what allows Apple to charge extra-extra premium pricing.

No, they're gaining share among normal people.

A low-cost MacBook will push that further.

> It's not a PC, just like my iPad isn't, even if I add a keyboard to it. Effectively, current Macs are iPads that run a more serious OS with a slightly bigger software choice.
>
> So Macs are iPads with a built-in keyboard.

You're being obtuse.

macOS is a real OS. The UX just kind of sucks.

dangerman1337

Senior member

> If, say, the additional cost of bringing AT0 to market for gamers is $200 million, AMD would need to sell 100,000 AT0s at $2000 to make up the costs. Would it really be impossible to make those numbers?
>
> There are like a hundred million PC gamers worldwide. Many of them can easily sell their previous Nvidia flagship and buy the new AMD flagship for zero cost. There are at least 100,000 wealthy AMD fanboys who would buy it even if AT0 is dogsh1t.
>
> There is no economic or logical reason not to bring out a consumer gaming version of AT0. It's not being made because the market is a cartel. You will have a real market once the Chinese enter it.

Not exact numbers, but AT0 probably costs something like $330 per chip (I used the 764mm² GB202 die size with https://stech.tech/die-per-wafer-calculator/). Add a 50% margin and you get $500 or so. So you'd have to sell 500,000 AT0 SKUs to gamers to start seeing some noticeable profit, ideally a million. I mean, an AT0 dGPU that's cut down to something like 154 CUs doesn't need to be sold at $2000; maybe $1500 with 36GB of VRAM, if DRAM costs go down substantially by the time RDNA 5 is due?

Big question: is selling a million AT0s to gamers feasible? That should be the question. If so, then AMD should do both a cut-down SKU and a full (or few-CUs-cut) SKU IMV (a 450W full-ish-fat SKU would go nicely vs Nvidia pushing 600 or more watts with a 6090 Ti).
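The per-chip estimate comes from a die-per-wafer calculation. A rough sketch of how such calculators work, using the common first-order die-per-wafer approximation and a simple Poisson yield model. The wafer cost and defect density below are hypothetical inputs, not figures from the thread or from any foundry, and the linked calculator may use a different model (edge exclusion, scribe lines), so its numbers can differ:

```python
import math

def gross_dies_per_wafer(die_area_mm2: float, wafer_diameter_mm: float = 300.0) -> int:
    """First-order estimate: wafer area / die area, minus a term for partial dies at the edge."""
    d = wafer_diameter_mm
    dies = math.pi * (d / 2) ** 2 / die_area_mm2 - math.pi * d / math.sqrt(2 * die_area_mm2)
    return math.floor(dies)

def poisson_yield(die_area_mm2: float, defects_per_cm2: float) -> float:
    """Fraction of fully working dies under a simple Poisson model: Y = exp(-A * D0)."""
    return math.exp(-(die_area_mm2 / 100.0) * defects_per_cm2)  # mm^2 -> cm^2

def cost_per_good_die(die_area_mm2: float, wafer_cost: float, defects_per_cm2: float) -> float:
    good_dies = gross_dies_per_wafer(die_area_mm2) * poisson_yield(die_area_mm2, defects_per_cm2)
    return wafer_cost / good_dies

AREA_MM2 = 764.0       # die size used in the post
WAFER_COST = 20_000.0  # hypothetical wafer price, not from the thread
D0 = 0.05              # hypothetical defect density, defects per cm^2

die_cost = cost_per_good_die(AREA_MM2, WAFER_COST, D0)
print(gross_dies_per_wafer(AREA_MM2))          # 68 candidate dies on a 300 mm wafer
print(round(die_cost), round(die_cost * 1.5))  # die cost, then with the post's +50% margin
```

This only counts fully working dies; salvaging defective ones into a cut-down SKU (like the 154 CU part mentioned above) raises the usable count and lowers the effective cost per chip.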


Win2012R2

Golden Member

> Big question: is selling a million AT0s to gamers feasible?

It is, if the following minimum conditions are true:

1) at least 30% faster than 5090

2) 5090 is the max Nvidia offers (as it is now)

3) $1500 max price - sell direct as FE only if necessary

To make it a hit, they need to release it by mid this year, or for Xmas 2026 at the latest.

I'll buy it.

Tachyonism

Member

> To make it a hit, they need to release it by mid this year, or for Xmas 2026 at the latest.

Not a chance.

The best they can do is probably H2 2027, just before the 6000 series comes out.

adroc_thurston

Diamond Member

> It is, if the following minimum conditions are true:
>
> 1) at least 30% faster than 5090
>
> 2) 5090 is the max Nvidia offers (as it is now)
>
> 3) $1500 max price - sell direct as FE only if necessary
>
> To make it a hit, they need to release it by mid this year, or for Xmas 2026 at the latest.
>
> I'll buy it.

See? There is no market for a high-end Radeon.

Selling more for less is just not a sustainable market position anyway. They're not a charity.

Krteq

Golden Member

> 1) at least 30% faster than 5090
>
> 2) ...
>
> 3) $1500 max price - sell direct as FE only if necessary

These 2 conditions simply can't coexist 🙄...
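What conditions 1) and 3) demand together can be put as a single perf-per-dollar number. A quick sketch, taking the RTX 5090's $1999 launch MSRP as the baseline (street prices have often been higher, which would shrink the gap):

```python
def perf_per_dollar(relative_perf: float, price_usd: float) -> float:
    """Performance (normalized to RTX 5090 = 1.0) per dollar of price."""
    return relative_perf / price_usd

RTX_5090_MSRP = 1999.0                    # launch MSRP
baseline = perf_per_dollar(1.00, RTX_5090_MSRP)
wishlist = perf_per_dollar(1.30, 1500.0)  # conditions 1) and 3): +30% perf, $1500 cap

print(f"{wishlist / baseline:.2f}x the 5090's perf per dollar")  # 1.73x the 5090's perf per dollar
```

In other words, the wishlist asks for roughly 73% more performance per dollar than Nvidia's flagship at MSRP.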

MrMPFR

Senior member

> But isn't that due to inherent bad cacheability of RT stuff? It's just too random, at least for puny caches

No, the NVIDIA design is just very inefficient. It does thread coherency sorting (SER) and OMM, but other than that there haven't really been any major changes since Turing.

The easiest fix for everyone is abandoning Execute Indirect and using only GPU Work Graphs with DXR 1.3. A big work graph covering the entire RT pipeline from start to finish should tackle many of the problems: improved occupancy; no barriers, bubbles, or empty launches; massively improved coherence, etc.

SER is a band-aid in comparison.
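The thread coherency sorting that SER does can be illustrated with a toy model: group rays by the hit shader they will run, so each 32-lane warp executes fewer distinct shaders. This is a sketch of the general idea only, not NVIDIA's implementation; the warp size of 32 matches NVIDIA GPUs, but the 16-material random workload is invented for illustration:

```python
import random

WARP = 32  # lanes per warp on NVIDIA GPUs

def divergence(shader_ids: list[int]) -> int:
    """Sum over warps of distinct hit shaders; each extra shader in a warp is another serialized pass."""
    return sum(len(set(shader_ids[i:i + WARP])) for i in range(0, len(shader_ids), WARP))

random.seed(0)
# Hypothetical workload: 4096 rays, each hitting one of 16 materials at random.
hits = [random.randrange(16) for _ in range(4096)]

print("unsorted:", divergence(hits))
print("sorted:  ", divergence(sorted(hits)))  # roughly one shader per warp after sorting
```

Real hardware also pays to move ray state when it reorders threads, which this model ignores.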

All NVIDIA cards from Ampere onward, and AMD RDNA 3-4 cards, have RT fine wine waiting to be tapped.

Cards without SER stand to benefit the most: on the NVIDIA side, Ampere (if we ignore OMM) should get much closer to the 40 and 50 series. RDNA 3 will benefit the most, but RDNA 4 will gain a lot as well.

If devs bother to rewrite RTGI pipelines in PC ports (unlikely), there's potential for massive perf gains in the short term; otherwise, unfortunately, this is post-crossgen and no earlier.

Extreme HW+SW co-design for GFX13 will unleash Work Graphs. While superior HW will still help in the short term in current and future DXR 1.1-1.2 games, this is what really matters for RDNA 5 PT in the long term.

dangerman1337

Senior member

> It is, if the following minimum conditions are true:
>
> 1) at least 30% faster than 5090
>
> 2) 5090 is the max Nvidia offers (as it is now)
>
> 3) $1500 max price - sell direct as FE only if necessary
>
> To make it a hit, they need to release it by mid this year, or for Xmas 2026 at the latest.
>
> I'll buy it.

Eh, it'll be sold mid-2027 to late 2027 due to things going on. Hopefully things all align by then, if the OpenAI circle-jerk bubble pops.

1250

Member

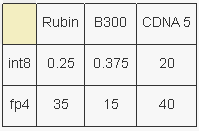

The effective training compute on an MXFP6 / MXFP4 arrangement will be something like:

MI455X: 25 PFLOPS

Rubin: 14 PFLOPS

Unless Rubin pulls some utilization miracle out of its hat, or FP6 doesn't progress (all signs point to stable performance), Rubin is totally cooked.

Less performance, less memory, more power, more cooling, more cost, almost certainly less reliability.

I can hear the chirping of the “but CUDA’s” getting softer and softer

(from reddit)

I can't wait until Meta and OpenAI unlock full training runs on FP6/MXFP6 and FP4/MXFP4.

AMD hardware will be rocking the full 40 PFLOPS while Rubin is running between 16.66 and 35 PFLOPS.

The leaks about performance are going to be face melting.

Is this only for inference, or is it secret? Or just hype?
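Taking the quoted Reddit figures at face value (none of them are verified), the implied advantage is simple division; the point worth noticing is how wide the second claim's range is:

```python
def advantage(amd_pflops: float, nvidia_pflops: float) -> float:
    """How many times larger the AMD figure is than the Nvidia one."""
    return amd_pflops / nvidia_pflops

# Figures exactly as quoted from the Reddit post above.
print(f"25 vs 14 PFLOPS: {advantage(25.0, 14.0):.2f}x")  # 25 vs 14 PFLOPS: 1.79x
for rubin in (16.66, 35.0):                              # the quoted Rubin range
    print(f"40 vs {rubin} PFLOPS: {advantage(40.0, rubin):.2f}x")
```

Depending on where Rubin actually lands in that range, the claimed lead swings from about 1.14x to about 2.4x.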


> It is, if the following minimum conditions are true:
>
> 1) at least 30% faster than 5090
>
> 2) 5090 is the max Nvidia offers (as it is now)
>
> 3) $1500 max price - sell direct as FE only if necessary
>
> To make it a hit, they need to release it by mid this year, or for Xmas 2026 at the latest.
>
> I'll buy it.

At $1500, the only issue I see at the moment is the memory situation. I think it could end up around $2000.

> See? There is no market for a high-end Radeon.
>
> Selling more for less is just not a sustainable market position anyway. They're not a charity.

If AMD releases an RTX Pro 6000 competitor on RDNA5/UDNA with xGMI, then Nvidia is in very serious trouble, because that's the key thing missing on the Nvidia workstation cards. Give me 30% better performance than a 5090 with at least 96GB of GDDR7 for $5000-$8000, but with xGMI, and I will buy 8 of them over 8 RTX Pro 6000s. The biggest thing right now is actually memory. The memory issues arrived quite conveniently to prevent AMD from continuing to propel itself by offering more HBM or GDDR. At this point it's a bit difficult, but you can still see how AMD's MI400 HBM made Nvidia upgrade their capacities.


adroc_thurston

Diamond Member

> If AMD releases an RTX Pro 6000 competitor on RDNA5/UDNA with xGMI, then Nvidia is in very serious trouble.

Well, it doesn't have xGMI.

Navi21 was the last part that had it.

> At this point it's a bit difficult, but you can still see how AMD's MI400 HBM made Nvidia upgrade their capacities.

No.

But speeds? Yeah.

They're not even gonna hit 11Gbps bins on launch which is very funny.
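Speed bins like "11Gbps" presumably refer to the per-pin data rate of the HBM4 stacks, and peak bandwidth scales linearly with that rate, which is why missing a bin matters. A sketch assuming the 2048-bit per-stack interface of JEDEC HBM4 and a hypothetical 8-stack package (the stack count is an illustration, not a claim about any specific GPU):

```python
HBM4_BUS_BITS = 2048  # per-stack interface width in JEDEC HBM4 (HBM3/HBM3E use 1024)

def stack_bandwidth_gb_s(pin_rate_gbps: float, bus_bits: int = HBM4_BUS_BITS) -> float:
    """Peak bandwidth of one stack in GB/s: data pins x Gbit/s per pin / 8 bits per byte."""
    return bus_bits * pin_rate_gbps / 8

STACKS = 8  # hypothetical package size, chosen only for illustration

for rate in (8.0, 10.0, 11.0):
    per_stack = stack_bandwidth_gb_s(rate)
    total_tb_s = STACKS * per_stack / 1000
    print(f"{rate:4.1f} Gbps/pin -> {per_stack:.0f} GB/s per stack, {total_tb_s:.1f} TB/s total")
```

At 11 Gbps each stack peaks at about 2.8 TB/s; every 1 Gbps short of that bin costs 256 GB/s per stack.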

1250

Member

> on launch which is very funny.

The consulting guy said that AMD is good at making realistic plans, but how many years in advance are these plans usually conceived?

p.s. Nvidia expect that?(amd)


> Well, it doesn't have xGMI.
>
> Navi21 was the last part that had it.

I think UDNA/RDNA5 will have UALink?? Like, if AMD makes an RTX Pro 6000 competitor with some sort of die-to-die communication, Nvidia is in serious trouble. It doesn't have to be as good as a future Rubin RTX 7000 Pro in terms of compute; it simply has to have enough memory, memory bandwidth, and UALink. The community will eat them up and sort out most software issues in ROCm or Vulkan.

For $5-10k, such a GPU undercuts a lot of the need for more expensive datacenter cards, especially with something like UALink. You could connect 8 GPUs in a single setup for ~1TB of VRAM on a high-throughput interconnect. I don't think Nvidia is keen on this happening with non-datacenter cards, but AMD doesn't have to work like that, as their market share in GPUs and AI isn't that high. I'm not saying it would replace the MI400s and VR300s, but it would offer a compelling self-hosted, quick-prototyping solution for a lot of businesses, startups, and researchers that would otherwise have rented cloud compute. That's a smaller market, but possibly the one with the largest implications for long-term ecosystem support.
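Whether 8 cards reach ~1TB depends on per-card capacity. A quick check, using the 96GB floor from the earlier xGMI post and 128GB as an alternative (both are just example inputs):

```python
def pooled_vram_gb(cards: int, gb_per_card: int) -> int:
    """Total memory across cards; it is not one flat address space, remote accesses cross the link."""
    return cards * gb_per_card

for per_card in (96, 128):
    print(f"8 x {per_card} GB = {pooled_vram_gb(8, per_card)} GB")
# 8 x 96 GB = 768 GB
# 8 x 128 GB = 1024 GB
```

So 8 cards at 96GB land closer to 0.77TB; ~1TB needs roughly 128GB per card. Pooled capacity also isn't one flat memory space: reaching another card's memory goes over the interconnect, which is why its bandwidth matters so much here.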

But Nvidia could still surprise everyone and make the next RTX Pro 6000 replacement even better than anyone expected.

> No.
>
> But speeds? Yeah.
>
> They're not even gonna hit 11Gbps bins on launch which is very funny.

Helios already has more VRAM (31TB vs 20.7TB) and basically the same memory bandwidth (22TB/s vs 19TB/s, although Nvidia had started out with 13TB/s) as the VR NVL72. And MI500 with UDNA will be even more competitive on the memory front. All AMD has to do is produce a halo card on RDNA 5 (AT0) for gaming and reuse it for an RTX Pro 6000 competitor; it's really that simple. People are going to go to whoever has the most memory, and with AI-driven development a lot of software moats can be fixed by the community.
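The rack-level VRAM figures follow from GPU count times per-GPU HBM capacity. A sketch that reproduces them, assuming 72 GPUs per rack, 432GB per MI455X and 288GB per Rubin GPU; those per-GPU capacities come from public spec announcements rather than from this post, so treat them as assumptions:

```python
GPUS_PER_RACK = 72  # assumption: both racks are described as 72-GPU systems

def rack_hbm_tb(gb_per_gpu: int, gpus: int = GPUS_PER_RACK) -> float:
    """Rack HBM capacity in decimal TB (1 TB = 1000 GB), the unit vendors quote."""
    return gb_per_gpu * gpus / 1000

# Assumed per-GPU capacities, not stated in the post:
print(f"Helios:   {rack_hbm_tb(432):.1f} TB")  # Helios:   31.1 TB
print(f"VR NVL72: {rack_hbm_tb(288):.1f} TB")  # VR NVL72: 20.7 TB
```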

marees

Platinum Member

> I think UDNA/RDNA5 will have UALink??

I believe Helios/MI455 already supports UALink, but the protocol or hardware itself is not mature, hence you may have to wait for MI500 (CDNA 6) for true UALink.

marees

Platinum Member

> I think UDNA/RDNA5 will have UALink?? Like, if AMD makes an RTX Pro 6000 competitor with some sort of die-to-die communication, Nvidia is in serious trouble. It doesn't have to be as good as a future Rubin RTX 7000 Pro in terms of compute; it simply has to have enough memory, memory bandwidth, and UALink. The community will eat them up and sort out most software issues in ROCm or Vulkan.
>
> For $5-10k, such a GPU undercuts a lot of the need for more expensive datacenter cards, especially with something like UALink. You could connect 8 GPUs in a single setup for ~1TB of VRAM on a high-throughput interconnect. I don't think Nvidia is keen on this happening with non-datacenter cards, but AMD doesn't have to work like that, as their market share in GPUs and AI isn't that high. I'm not saying it would replace the MI400s and VR300s, but it would offer a compelling self-hosted, quick-prototyping solution for a lot of businesses, startups, and researchers that would otherwise have rented cloud compute. That's a smaller market, but possibly the one with the largest implications for long-term ecosystem support.
>
> But Nvidia could still surprise everyone and make the next RTX Pro 6000 replacement even better than anyone expected.
>
> Helios already has more VRAM (31TB vs 20.7TB) and basically the same memory bandwidth (22TB/s vs 19TB/s, although Nvidia had started out with 13TB/s) as the VR NVL72. And MI500 with UDNA will be even more competitive on the memory front. All AMD has to do is produce a halo card on RDNA 5 (AT0) for gaming and reuse it for an RTX Pro 6000 competitor; it's really that simple. People are going to go to whoever has the most memory, and with AI-driven development a lot of software moats can be fixed by the community.

Whatever you are saying is what I think TinyGrad is trying to do.

Not sure what AMD's plan is.
