DisEnchantment

BTW, if it is true and the L3 die is below, then why not make the SRAM amount > 64 MB? There would be room for more on the die.

So 5.2 GHz max boost is pretty much confirmed. If the thermal constraint was lifted, why is boost still 0.3 GHz down from the non-V-Cache model?

> Roughly 5.4 ns for M1 [= 12 MB shared L2$] vs 2.4 ns for 7950X [= 1 MB private L2$].

Or 3.8 ns for Telum [= 32 MB private L2$¹]

> It's possible that they may decouple it into an L4 cache to allow the first 32 MB of L3 to have a lower latency, at the expense of a few extra cycles of RAM latency.

In client (where you can probably only afford a design with modifications for both mobile and desktop) I'd much rather have an SLC instead of L4.

> In client (where you can probably only afford a design with modifications for both mobile and desktop) I'd much rather have an SLC instead of L4.

That's a giant L3 you're not gonna see in a long time...

Each layer of cache adds extra complications, more tags to keep track of, etc.

That's one of the reasons Apple and Qualcomm forgo L3. Unless you can afford to make the L3 big enough (say 24GB - 32GB+), you might be better off with bigger shared L2 caches and an SLC that also benefits the GPU, NPU ...

> That's a giant L3 you're not gonna see in a long time...

Maybe not an L3, but it could be an L5 made up of really fast, highly parallel Optane memory.

> Maybe not an L3, but it could be an L5 made up of really fast, highly parallel Optane memory.

Nah

> That's a giant L3 you're not gonna see in a long time...

Yeah, that's why you're not gonna see L3 on Qualcomm / Apple SoCs.

> Yeah, that's why you're not gonna see L3 on Qualcomm / Apple SoCs.

Well, yeah that and how big or high Gigabyte size L3s would be. It's absurd.

At least until they are 90% mobile focused. Apple's rumored server SKUs might actually have L3 and it might trickle down to higher end desktop / M Max SKUs

> If Zen continues to get wider and slower, then I can see them using the reduced clockspeed targets to double the size of the L2 while keeping the same number of cycles of latency. That should help with throughput a bit.

From my layman PoV, investing in a bigger L2 does not yield much in terms of general-purpose IPC. Zen 4 doubled Zen 3's relatively small 512 kB L2. Yet it trailed the rest of the "major IPC contributors" with sub-2% IPC points. Intel has gone the same odd L2-growing route since Willow... 512 kB -> 1.25 MB -> 2 MB -> 2.5/3 MB.
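The "same number of cycles at a lower clock" idea from the quoted post can be put into numbers with a quick sketch. The cycle count and clock speeds below are hypothetical values picked only for illustration, not measurements of any Zen part; the only relationship used is latency in ns = cycles / clock in GHz.

```python
def latency_ns(cycles: int, clock_ghz: float) -> float:
    """Wall-clock time of a cache hit that takes `cycles` core cycles."""
    return cycles / clock_ghz


# Hypothetical L2 hit latency in cycles (assumption, not a measured figure).
L2_CYCLES = 14

# Hypothetical clock targets: a faster design and a wider, slower one.
for clock_ghz in (5.7, 5.0):
    print(f"{clock_ghz:.1f} GHz: {latency_ns(L2_CYCLES, clock_ghz):.2f} ns")
```

Holding the cycle count fixed means the hit takes longer in nanoseconds at the lower clock, and that extra time is the slack a larger array could use.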

> CopeFrameX is saying on Xitter that 9800X3D is looking strong in reviews. "Better than 8% in demanding scenarios".
>
> Y'all don't think AMD will price this thing above $449, do you?

It'll be $450 for a few reasons. Competition isn't one of them.

> Steve will run out of chart space for the 9800X3D release. I think it will look something like this:
>
> View attachment 110469

It'll be lower

> It'll be lower

You will need an ultrawide screen to see this chart. Trust me bro.

> Maybe in ACC or Flight Sim.
>
> Not so sure on average.

Even 10% is close to off screen on this chart xD

> Even 10% is close to off screen on this chart xD

Not an issue if you can view the chart in VR. Then just tilt your head a bit to the right and you will see the bar poking out in 3D.

> Well, yeah that and how big or high Gigabyte size L3s would be. It's absurd.

Oof yeah, I wrote that without double checking. Obviously I meant 24 - 32+ MB. My bad.

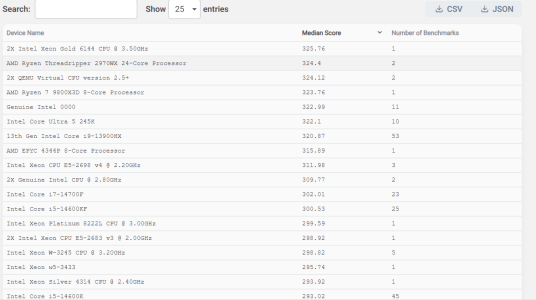

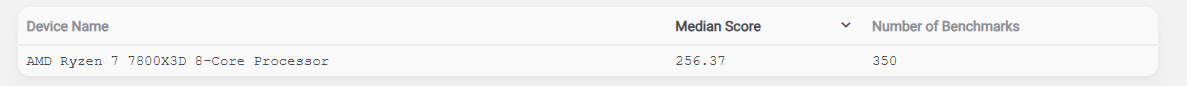

> 9800X3D Blender Open Data entry. 11% faster than 9700X. OC maybe? Or maybe it's due to its 120W TDP vs the original 65W TDP of 9700X. It's massively faster than 7800X3D.
>
> Blender - Open Data (opendata.blender.org)
>
> View attachment 110463
>
> View attachment 110464

More optimized V/F curve

> CopeFrameX is saying on Xitter that 9800X3D is looking strong in reviews. "Better than 8% in demanding scenarios".
>
> Y'all don't think AMD will price this thing above $449, do you?

No. But they won't price it any lower either.