SolidQ

Golden Member

3k$?

Brother you're gonna be sooooo disappointed.

> AMD's been filing patents for data reuse cache + global VGPR-to-VGPR network but not sure if it's gonna solve the bottleneck. Not confirmed yet.

The data reuse cache patent seems to be more tailored towards CPUs.

Halved cache latency is very impressive!

3. The method of claim 2, wherein the loading the data from the load response is performed in four cycles from the level of the cache system and the loading the data from the data reuse cache is performed in two cycles.

11. The processor unit of claim 10, wherein the data reuse cache is physically located closer to the execution unit on an integrated circuit than the load-store unit or the cache system.

> The data reuse cache patent seems to be more tailored towards CPUs.

Yeah, that's an L0d for (probably) Zen 7.

Very interesting is claim 3:

- 4 cycles = L1-Cache (48kByte on Zen 5)

- 2 cycles = Data Re-Use Cache (maybe 16kByte?)

Halved cache latency is very impressive!
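To put rough numbers on claim 3, here is a back-of-the-envelope sketch of the average load latency for a given hit rate in the data reuse cache. The 2 and 4 cycle figures come from the claim. The hit rates are made-up inputs, and the model assumes that a miss in the reuse cache costs exactly the 4-cycle L1 latency with no extra penalty, which the quoted claims do not confirm.

```python
def average_load_latency(reuse_hit_rate, reuse_cycles=2, l1_cycles=4):
    """Average load latency in cycles for loads that hit the reuse cache or the L1.

    Assumption (not from the patent): a reuse-cache miss is served by the L1
    at its normal latency, with no added lookup penalty.
    """
    if not 0.0 <= reuse_hit_rate <= 1.0:
        raise ValueError("reuse_hit_rate must be between 0 and 1")
    return reuse_hit_rate * reuse_cycles + (1.0 - reuse_hit_rate) * l1_cycles


# Hypothetical hit rates, purely for illustration.
for rate in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(f"hit rate {rate:.2f}: {average_load_latency(rate):.2f} cycles")
```

The full halving only shows up for loads that actually hit the reuse cache, so the real-world gain depends heavily on how often data is reused in that small window.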

This is how it is realized:

The data re-use cache sits very close to the execution units and before the Load and Store system.

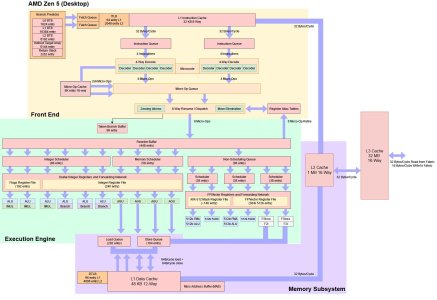

Looking at the Zen 5 core diagram, this Data Re-Use Cache must therefore sit inside the Execution Engine block or between the Load/Store Units and the Execution Engine.

AMD’s Ryzen 9950X: Zen 5 on Desktop

AMD’s desktop Zen 5 products, codenamed Granite Ridge, are the latest in the company’s line of high performance consumer offerings. chipsandcheese.com

> The data reuse cache patent seems to be more tailored towards CPUs.

It does mention parallel processor four times. I just assumed it would be used given all the weird design choices relating to register renaming, improved queue priority, OoO etc...

> AMD's been filing patents for data reuse cache

GPUs already have opreuse caches, they sit right behind operand collectors.

> It does mention parallel processor four times. I just assumed it would be used given all the weird design choices relating to register renaming, improved queue priority, OoO etc...

It might get used on GPUs. But the claims are very specific given the 2/4 cycles cache latency. GPU caches are not in that latency region. At least claims 1-9 are not applicable to GPUs. The other claims 10-20 might be used for CPUs and GPUs. But probably still more beneficial for CPUs, because the invention is primarily about latency reduction beyond and lower than the L1 cache. GPUs are much more latency tolerant, so the benefit of such an L0d is lower.

Here's the patent for how they avoid flooding that data reuse cache:

US20250217298A1 - Systems and methods for reducing cache fills - Google Patents

A method for reducing cache fills can include training a filter, by at least one processor and in response to at least one of eviction or rewrite of one or more entries of a cache, the filter indicating one or more cache loads from which the one or more entries were previously filled. The method... patents.google.com
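For anyone wondering what "training a filter on eviction" could look like, here is a toy sketch of one possible reading of that abstract. Everything in it is hypothetical: the class name, the LRU policy, the saturating counter and the threshold are my own illustration, not the patent's design, and it only trains on eviction (the abstract also mentions rewrite). The idea is simply that loads whose fills keep getting evicted without being reused stop filling the cache.

```python
from collections import OrderedDict


class FilteredReuseCache:
    """Toy LRU cache whose fills are gated by a per-load filter (hypothetical design)."""

    def __init__(self, capacity=4, threshold=2):
        self.capacity = capacity
        self.threshold = threshold
        self.entries = OrderedDict()  # address -> [filling load PC, was_reused flag]
        self.useless_fills = {}       # load PC -> saturating count of unreused evictions

    def _train(self, load_pc, was_reused):
        # Train on eviction: penalize loads whose fills were never reused.
        count = self.useless_fills.get(load_pc, 0)
        if was_reused:
            count = max(0, count - 1)
        else:
            count = min(self.threshold, count + 1)
        self.useless_fills[load_pc] = count

    def access(self, load_pc, address):
        """Return True on a hit. On a miss, fill only if the filter allows it."""
        if address in self.entries:
            self.entries[address][1] = True
            self.entries.move_to_end(address)
            return True
        if self.useless_fills.get(load_pc, 0) >= self.threshold:
            return False  # fill suppressed: this load has a history of useless fills
        if len(self.entries) >= self.capacity:
            _, (victim_pc, was_reused) = self.entries.popitem(last=False)
            self._train(victim_pc, was_reused)
        self.entries[address] = [load_pc, False]
        return False


# A streaming load (made-up PC 0x10) touches each address once and gets filtered out.
cache = FilteredReuseCache(capacity=2, threshold=2)
for addr in range(5):
    cache.access(0x10, addr)
```

In this toy version a filtered load only recovers if one of its older entries is later evicted after being reused, so a real design would need some way to retrain.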

> Unfortunately that likely means FSR5 won't be able to run on RDNA 4 cards.

It will run. But probably slower or as a tuned down version (like the FSR4 INT8 version on PS5 Pro).

> It will run. But probably slower or as a tuned down version (like the FSR4 INT8 version on PS5 Pro).

I wouldn't be so sure. If it's very different even compared to current 50 series (due to architectural changes), it'll run horribly on RDNA4, worse than FP8 emulation, or AMD will have to spend time making a separate RDNA 4 version.

> I think shared caches etc. like you mention with the other patents will yield in more performance uplifts than an L0d.

100%. If only I knew how GPGPU-sim worked. Then we could get somewhat reasonable estimates before launch.

If we take into account the rest of gfx13's patent derived changes for RT Traversal, ignoring everything else, then I can see only one scenario: one where RDNA 5 dominates. In ray traversal throughput (at same raster) it should be able to destroy ALL existing generations, including current NVIDIA ones. In addition, factoring in the rest of the potential architectural changes should only extend the lead.

- I'll prob need to redo October analysis because it ain't complete at all, since many new patents have surfaced in the last 5 months.

As for RT shading portion many things will likely change such as cachemem and scheduling to name a few. In addition it seems like SWC is a dedicated block unlike NVIDIA SER (repurposes existing HW). As a result in existing PT games (DXR 1.2) RDNA 5 shouldn't have any problems winning at iso-raster. When it comes to a potential work graphs pivot for DXR 1.3, AMD and everyone else has untapped fine wine, but RDNA 5 much more so for reasons explained too many times to repeat.

Remember that RT is just one aspect of an architecture, and we need to consider all potential changes with the nextgen Radeon. With this in mind, architecturally it looks like RDNA 5 could be their most impressive product family since the R300 series (9700 pro). I'm talking about architectural sophistication and superiority here over existing products, not that they're gonna take the performance crown.

As for 60 series I have no idea and wonder what kind of HW changes 60 series introduces to counter this. Another Ada rebrand or Turing resource spam (Ampere) definitely isn't going to cut it, and it would be asinine for NVIDIA to keep bolting things onto Ampere for the third time. Hopefully we see a major architectural change on NVIDIA side.

It will be interesting to see if any of the PT stuff will even matter long term considering neural shading is on track to replace most if not all of the shading pipeline.

The only way I would buy an RDNA 5 AT0 is if it meets or beats my RTX 5090 for the same or less money. Also, the numbers are questionable from the "leak" that Tom from Moore's Law Is Dead keeps pushing that the AT0 with 154 Compute Units and 24GB of GDDR7 VRAM on the 3nm process running at ONLY 380W will beat the 5090 and be a contender with the RTX 6090. I find that difficult to believe given the TBP and AMD not being really power efficient relative to performance. Additionally, the RTX 5090 has 170 Streaming Multiprocessors which is the equivalent to a Compute Unit, so the RTX 5090 already is above what AMD's RDNA 5 AT0 is putting out when it comes to CUs vs SMs. I know the die shrink should have an IPC boost, but it all depends on if an increase from previous generations will translate to the upcoming generation.

Regarding price, AMD will have to charge a premium because Radeon sells less than Geforce. Lowering the price won’t help much. Gamers are trained “Geforce” and AMD knows it.

If gamers get AT0, it is likely going to be priced higher than the 5090.

…and that is an “if”. A whole lot of companies are putting projects on hold due to the memory/storage insanity.

> Additionally, the RTX 5090 has 170 Streaming Multiprocessors which is the equivalent to a Compute Unit,

Yeah? 64CU N48 goes alright vs a 70SM GB203 chop. Might be another Factor here that helps it? Maybe a Factor that being a node of GB202 ahead could help Maximize.

> AMD not being really power efficient relative to performance

An N48 chop is usually near the top of charts for power efficiency and notably VSync efficiency while hampered by obsolescent memory that has higher pJ/bit transmit (poor RTX 5050, it's the least efficient Blackwell so far).

> Yeah? 64CU N48 goes alright vs a 70SM GB203 chop. Might be another Factor here that helps it? Maybe a Factor that being a node of GB202 ahead could help Maximize.

The node ahead could be a factor, but I still don't think it will reduce power consumption to the level of 380W to produce better overall performance over the GB202.

> I don't know why I decided to respond to a 1 poster. The new theme makes it harder to discern. But we can't categorize RDNA5 configurations based on RDNA4 simplifications and MLID's bogus TBP. But don't worry, it's not shipping outside of DC. We won't have to buy Radeon.

I just joined because I want to question Kepler_L2 who is the purveyor of propaganda that MLID is peddling. Suffice it to say, I have an axe to grind with how much misinformation seems to spew from the AMD side - it's the equivalent of the "RTX 4090-level performance for $549". I just think that the rumor mills are what has led to a lot of discontentment in gamers who get fed false hope - like FSR 4 being ported to RDNA 3 and MLID and others in the TechTuber world who get people's hopes up.

> RTX 5090 for less money

That's another bad assumption. It will sell for more than an RTX 5090 if they caught the customer they hunted. But not to us. There's no way you build a chip that big on the hope to sell it to DLSS enthusiasts.

> That's another bad assumption. It will sell for more than an RTX 5090 if they caught the customer they hunted. But not to us. There's no way you build a chip that big on the hope to sell it to DLSS enthusiasts.

You're probably right. If it is released to gamers, it would almost be legally actionable (possibly so for breach of fiduciary duty to shareholders) if AMD gave a more powerful, or even equally powerful, GPU to consumers for less money. If NVIDIA cannot maintain the enthusiast/high-end GPU consumer, then something definitely went awry at NVIDIA.

> Additionally, the RTX 5090 has 170 Streaming Multiprocessors which is the equivalent to a Compute Unit, so the RTX 5090 already is above what AMD's RDNA 5 AT0 is putting out when it comes to CUs vs SMs.

5090 like 4090 is hitting a massive scaling wall. Evident if you take TPU's averaged 4K raster native numbers and compare against game clock adjusted TFLOPS.

> and be competitive to above an RTX 5090 for less money

If they win, they charge more.

> it will reduce power consumption to the level of 380W to produce better overall performance over the GB202.

Piss easy, GB202 is dogwateringly bad.

> 5090 like 4090 is hitting a massive scaling wall. Evident if you take TPU's averaged 4K raster native numbers and compare against game clock adjusted TFLOPS.

True, based on TPU numbers a 5090 is only like 30% faster than a 4090, and most people were expecting it to be much faster.

Vs a 5070 Ti and 5080 it's -26%; vs a 5060 Ti 16GB it's -35%. It functions like a GPU with 126 and 110.5 SMs respectively.
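Those SM-equivalent figures come from scaling the 5090's 170 SMs by the quoted shortfall. A minimal sketch of that arithmetic, where the function name is just for illustration and the percentages are taken from the post as-is:

```python
SM_COUNT_5090 = 170  # SM count of the RTX 5090, as quoted earlier in the thread


def effective_sms(sm_count, scaling_shortfall):
    """SM count the card behaves like, given a fractional per-SM scaling shortfall."""
    return sm_count * (1.0 - scaling_shortfall)


# Shortfalls from the post: -26% vs 5070 Ti / 5080, -35% vs 5060 Ti 16GB.
for reference, shortfall in (("5070 Ti / 5080", 0.26), ("5060 Ti 16GB", 0.35)):
    print(f"vs {reference}: ~{effective_sms(SM_COUNT_5090, shortfall):.1f} effective SMs")
```

So 170 x 0.74 is about 125.8 (rounded to 126 in the post) and 170 x 0.65 is 110.5.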

If they fix core scaling on RDNA 5 then game over for 5090.

The sad part is AMD actually has solid double digit share in DIY desktop and sales data reflects that.

It's open season for press grilling:

PC graphics cards are now nearly 100 percent Nvidia

The latest analysis of the PC graphics-card market shows AMD following Intel's plunge in market share, ceding almost the entire PC graphics market to Nvidia. www.pcworld.com

It does not matter they're also mentioning the flawed Steam review results or some other fallacy that may come up in the near future, the end result is the same: the press can and will erode whatever mind share Radeon may still have with consumers.

But hey, good thing AMD did not release FSR4 for RDNA3 (or even some RDNA2), it may have been poorly received by the press. /s

> Steam HW study needs to be paused as it leads to all sorts of flawed perceptions

While I think that Steam's HW Survey needs to be revised (a beta version of this HW self-reporting is currently available), I find it odd that neither AMD nor NVIDIA will give us hard sales numbers. I think it might be because it is hard to actually track where the GPUs ultimately land once they are sold to AIB partners. Surveys can only be so definitive. I know both sides go to the data sets that most favor their narrative. I will say that AMD did a good job with the mid-range with the 9000 Series. I wonder if they will revise their business strategy for RDNA 5 and scrap the AT0 flagship GPU for gaming to remain focused on the mid-range. I assume that would only happen if RAM demand and prices remain high.