Question x86 and ARM architectures comparison thread.

poke01

Diamond Member

All of this is pure BS.

- Optimized macOS vs Windows (currently a mess)

- 16k paging on ARM (Apple) vs 4k paging (x86, other ARM CPUs). According to Google, 16k paging is 5-10% faster depending on workload (link: https://android-developers.googleblog.com/2025/07/transition-to-16-kb-page-sizes-android-apps-games-android-studio.html)

- Better node for ARM (Apple, Qualcomm), which translates to lower power draw

- Not running the same binary

- Also, let's not forget things like: if SoC == benchmark software's favourite brand, then Score = Score + (5-10)%

Why? Apple CPUs were also tested on Linux and performance was the same or better. So go troll elsewhere
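For anyone following the 16k vs 4k paging point above, here is a minimal sketch of why page size matters at all: the same working set needs a quarter as many pages at 16 KiB, so fewer TLB entries cover the same memory. The 64 MiB working set and the `pages_needed` helper are assumptions made up for illustration, and this says nothing about whether the 5-10% figure holds for any particular workload.

```python
import mmap


def pages_needed(working_set_bytes: int, page_size_bytes: int) -> int:
    """Number of pages needed to map a working set (ceiling division)."""
    return -(-working_set_bytes // page_size_bytes)


# Hypothetical 64 MiB working set, chosen only for illustration.
WORKING_SET = 64 * 1024 * 1024

for label, size in (("4 KiB", 4 * 1024), ("16 KiB", 16 * 1024)):
    print(f"{label} pages: {pages_needed(WORKING_SET, size)} pages")

# Page size of the machine running this script (varies by OS and CPU).
print(f"page size on this machine: {mmap.PAGESIZE} bytes")
```

The last line is a quick way to check which page size a given test system is actually using before comparing results across platforms.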

johnsonwax

Senior member

Pretty impressive they built that bias in 3 years before the M1 was even announced.

Why not? For ideology or money anyone can do anything. SPEC consortium is not free of those.

poke01

Diamond Member

You can see the source code as well. So clearly that person is upset that x86 is losing.

Pretty impressive they built that bias in 3 years before the M1 was even announced.

gdansk

Diamond Member

I will not. AMX units separate from the core are just as pointless to add to a CPU benchmark. Removing AES was great. Adding support for matrix accelerators separate from the core was a mistake. Nice way of nearly immediately making GB6 composite more dubious than it needed to be. But at least when Intel and AMD game their composite scores - the only bit reported by every "tech journalist" - they will perhaps be more comparably bogus.

Keep in mind AMD and Intel will introduce an SME-like extension that is shared between cores to client in 2 years and Geekbench will support it. So all you x86 fans better shut up now about SME.

poke01

Diamond Member

Well, you better get used to it. These companies will always find ways to game benchmarks. I agree with you, it's just that always harping on about SME/AMX is annoying.

I will not. AMX units separate from the core are just as pointless to add to a CPU benchmark. Removing AES was great. Adding support for matrix accelerators separate from the core was a mistake. Nice way of nearly immediately making GB6 composite more dubious than it needed to be. But at least when Intel and AMD game their composite scores - the only bit reported by every "tech journalist" - they will perhaps be more comparably bogus.

MerryCherry

Member

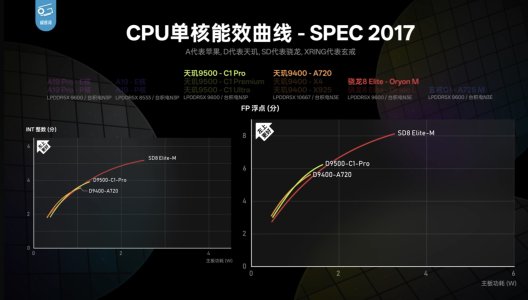

specifically the implementation by Xiaomi in their Xring O1 chip. They apparently used custom-designed cell libraries and a bunch of other tricks.

If nothing else that shows the Oryon 2 M being well handled by the A725.

Even the A19-E core isn't that much of an improvement over A725 in INT workloads, and A18-E is beaten by the A725 in both INT and FP!! 😮

Quite pleasantly surprised by that info!

The A725 in MediaTek isn't that good.

MerryCherry

Member

M2 and M3 are pretty old now, why is there only a single data point for each?

So why does the M4 review do the same thing? There's a line for the Qualcomm chip but no Apple chips. Are they trying to draw attention toward the 8 Gen3?

edit: and the iPhone 17 review video. All the Apple chips have a dot and all the Android chips have a line.

This probably has to do with the fact that they need to crack the OS to draw the lines (by setting custom clock speeds). The Android results were obtained by rooting the phones, I believe, and the results for x86/X Elite were obtained through Linux/WSL. Jailbreaking iPhones is hard these days, and only M1/M2 have Asahi Linux support.

I'm guessing he never wrote the script to get the data points to draw the curve.

I mean, if your focus is on x86/Android, then the x86/Android curves are useful and Apple Silicon is this 'oh yeah, there's also this thing which suggests what's possible'.

MTK seems to just coast on minimalist implementations.

specifically the implementation by Xiaomi in their Xring O1 chip. They apparently used custom-designed cell libraries and a bunch of other tricks.

The A725 in MediaTek isn't that good.

As for Xiaomi they are risking.... er.... people getting annoyed with them for being too good at their jobs going by the history with Huawei.

My problem is not the SME cluster, it's that it is part of the ST benchmark. That's about it.

Keep in mind AMD and Intel will introduce an SME-like extension that is shared between cores to client in 2 years and Geekbench will support it. So all you x86 fans better shut up now about SME.
