> 1/3/5/7 (every odd tock) is like that.

That honestly doesn't bode too well, because so far, only the even ones (2 and 4) were actually good.

Discussion RDNA 5 / UDNA (CDNA Next) speculation

adroc_thurston

Diamond Member

> That honestly doesn't bode too well, because so far, only the even ones (2 and 4) were actually good.

I love superstitions but they just have a roadmap and crank it.

Also RDNA4 sucks balls for obvious reasons.

> Also RDNA4 sucks balls for obvious reasons.

You mean the lack of halo, or is there something wrong with the uArch itself?

I mean, 64 CUs with GDDR6 virtually matching (and sometimes beating) the 5070Ti is quite alright in my book.

They nearly caught up on a range of issues (V/f, PPW, RT, PT, AI/FSR, mem bw efficiency), and pretty much are slightly ahead on raster IPC for CU vs. SM.

RDNA3 vs. Ada looked FAR worse. Had NV wanted to, they could've priced AMD out of the market then and there.

adroc_thurston

Diamond Member

> You mean the lack of halo, or is there something wrong with the uArch itself?

A bit of both.

They cheaped out where they could.

> RDNA3 vs. Ada looked FAR worse.

Only in mobile and 3.5 fixed that anyway.

> They nearly caught up on a range of issues (V/f, PPW, RT, PT, AI/FSR, mem bw efficiency), and pretty much are slightly ahead on raster IPC for CU vs. SM.

It is indeed a very good shader core; they really haven't shipped any bad ones anyway.

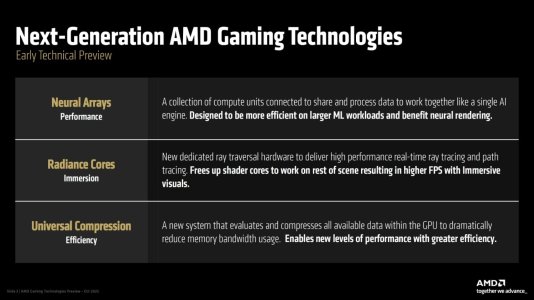

Hoho, somehow I missed the leak from Chiphell Forum. Just want to add the Universal Compression of RDNA5:

> A significant enhancement to reduce memory bandwidth needs by compressing virtually all graphics data, not just textures, enabling faster performance, lower memory requirements for 4K/higher gaming, and boosting upscaling tech like FSR. It works by applying compression across the entire GPU pipeline, leveraging increased silicon speed to overcome traditional performance costs, thereby increasing effective memory bandwidth and improving efficiency for next-gen gaming experiences.

Double SP Per CU + Universal Compression, HOHO 😎

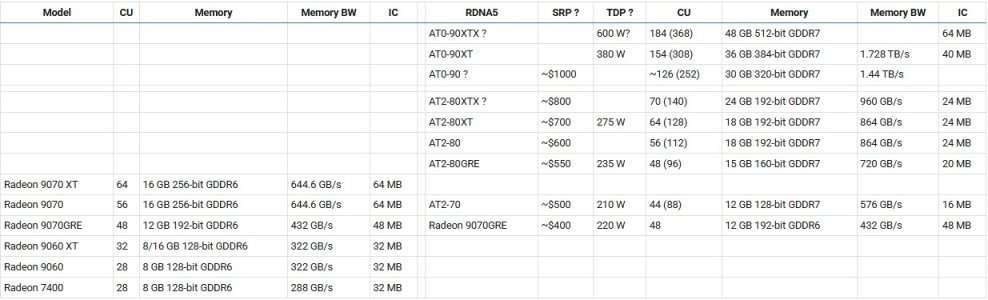

The table above includes the RDNA5 leaks from MLID plus my speculated/missing SKUs, marked with a question mark. As I said, AT1 is being cancelled because the full AT2 die with faster GDDR7 should be competitive with Rubin-70Ti. Oh yeah, all other OEMs have already implemented 128 SP per CU; I really hope you guys do more homework before commenting. 😉

Microsoft would rather pay for 408mm2 of wafer than custom design their own APU, because developing a 3nm APU costs hundreds of millions. Only Sony, with its high sales volume, can afford to customize its APU with a smaller die area: ~280mm2. Hoho, I'll let you guys think about why I mention these... 😎

One more hint: 7800XT (N32, 60CU) -> 9070XT (N48 XTX full die, 64CU). Why did AMD downgrade N48 to the 70 series? 😛

In conclusion, AMD has only prepared 2 RDNA5 dies, AT0 and AT2, for the dGPU lineup, and we should forget about a mobile GPU lineup. Meanwhile AT3 and AT4 are being used as mGPUs with CPU SoCs, which sell in laptops priced at $1500 and above, not as cheap dGPUs. Geez.

Anyhow, the RDNA5 lineup is going to be mighty impressive. As I speculated in the Soundwave thread, the Radiance core will be 3 times faster per core, so the RT of AT2-80XT with 64 CUs should be 2.x times faster than RDNA4's 9070XT (64 CUs), depending on clock speed and features.

Win2012R2

Golden Member

> the Radiance core will be 3 times faster per core

Doing exactly what - RT?

Win2012R2

Golden Member

> Yep, new term for RT cores.

What makes you think they will be 3x faster per core - about the catch up they need to hit nvidia levels of RT?

What? Where's that coming from?

X3D parts need massively lower power to stay cool enough even under meh-ish cooling solutions, and iirc L3/VCache runs at the clock of the fastest core, so they limited PT and turbo clocks vs. the vanilla models.

Pretty sure that in terms of low leakage, the 5800X3D, 7800X3D and 9800X3D are better bins than most 5700X, 7700X and 9700X, respectively.

The 9850X3D has to be a better bin of course; it can probably just hit higher clocks at the same voltages, but like adroc said, they had 1.5 years of yield improvements and to accumulate top-bin chips for this SKU.

I don't think the objective was to achieve the lowest leakage. I think the objective was to reduce peak power (heat) which can be achieved by capping frequency and voltage, while using average or below average bins.

marees

Platinum Member

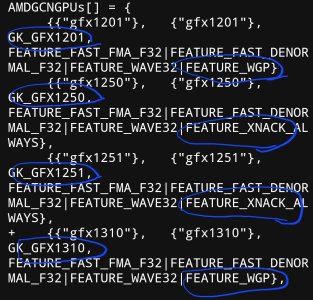

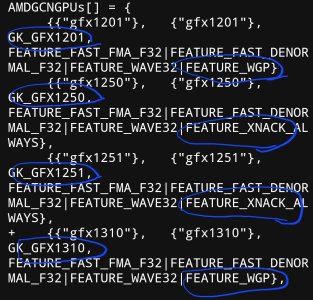

GFX1310

on XNACK

> Supported Hardware
>
> Not all GPUs are supported. Most GFX9 GPUs from the GCN series usually support XNACK, but only APU platforms enabled it by default. On dedicated graphics cards, it’s disabled by the Linux amdgpu kernel driver, possibly due to stability concerns as it’s still an experimental feature.
>
> For users of GFX10/GFX11 GPUs from the RDNA series, unfortunately, XNACK is no longer supported. Only computing cards from the CDNA series have XNACK support, such as Instinct MI100 and MI200 - and they also belong to the GFX900 series.
>
> Thus, use of Unified Shared Memory, which is the recommended practice and heavily used in SYCL programming, suffers from a serious hit. By not supporting XNACK on customer-grade desktop GPUs, AMD has essentially made a core feature in SYCL almost useless, forcing it to be an exclusive feature for datacenter users running CDNA cards with a price tag of $5000. This is unfortunate, but is something that developers who want to write cross-platform GPU code need to live with (for the highest performance, you may want to use manual data movements anyway, so it’s not all a loss, more on that later).

What is XNACK on AMD GPUs, and How to Enable the Feature

On AMD GPUs, the feature XNACK is essential for running HIP code with Managed Memory. But what XNACK does, or how it can be enabled, is poorly documented. I believe this article is the only comprehensive guide on the entire Web.

niconiconi.neocities.org
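For anyone who wants to check what a given target reports, here is a rough Python sketch that reads the XNACK setting out of an LLVM-style AMDGPU target ID string such as `gfx90a:sramecc+:xnack-`. The function names are made up for illustration, and the example strings are just sample inputs, not output captured from any particular card or driver.

```python
def parse_target_id(target_id: str) -> dict:
    """Split a target ID like 'gfx90a:sramecc+:xnack-' into its parts."""
    processor, *features = target_id.strip().split(":")
    settings = {}
    for feature in features:
        # Each feature is a name followed by '+' (on) or '-' (off).
        if not feature or feature[-1] not in "+-":
            raise ValueError(f"malformed feature {feature!r} in {target_id!r}")
        settings[feature[:-1]] = feature.endswith("+")
    return {"processor": processor, "features": settings}


def xnack_state(target_id: str) -> str:
    """Return 'on', 'off', or 'unspecified' for the xnack feature."""
    xnack = parse_target_id(target_id)["features"].get("xnack")
    if xnack is None:
        return "unspecified"
    return "on" if xnack else "off"


if __name__ == "__main__":
    for sample in ("gfx90a:sramecc+:xnack-", "gfx908:xnack+", "gfx1100"):
        print(sample, "->", xnack_state(sample))
```

A target ID with no `xnack` entry comes back as "unspecified", which is a statement about the string only and says nothing about what the hardware could do.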

branch_suggestion

Senior member

> GFX1310

Probably not enough info yet to zero in the exact product.

> Probably not enough info yet to zero in the exact product.

It's AT0 or AT2

branch_suggestion

Senior member

> It's AT0 or AT2

Makes sense yeah, there has been no consistent pattern so it could be either.

Then what of CDNA6 and Orion/Canis?

> CDNA6

gfx1350

> Orion/Canis

gfx1300/1301

MrMPFR

Senior member

More gfx13 in LLVM: https://github.com/llvm/llvm-project/pulls?q=gfx13+

No idea what any of it means except it looks like all the ISA stuff for now is just a placeholder.

marees

Platinum Member

> More gfx13 in LLVM: https://github.com/llvm/llvm-project/pulls?q=gfx13+
>
> No idea what any of it means except it looks like all the ISA stuff for now is just a placeholder.

I understand LLVM releases happen once every 6 months, so if AMD misses this deadline then the next one is 6 months away. Is that correct?

MrMPFR

Senior member

Very interesting AMD/Xilinx patent: https://patentscope.wipo.int/search/en/detail.jsf?docId=US471590844

Is this in RDNA5?

adroc_thurston

Diamond Member

> Very interesting AMD/Xilinx patent: https://patentscope.wipo.int/search/en/detail.jsf?docId=US471590844
>
> Is this in RDNA5?

No.

Forget about patent stuff.

marees

Platinum Member

> Very interesting AMD/Xilinx patent: https://patentscope.wipo.int/search/en/detail.jsf?docId=US471590844
>
> Is this in RDNA5?

Not sure if Xilinx stuff comes to ROCm or Instinct/Radeon stuff.

What AMD has said is that they can do the equivalent of Strix Halo on Xilinx, if needed. This was in response to competition from Qualcomm and the like on edge inference.

marees

Platinum Member

RGT on L1 cache pooling/sharing & reduced L2 cache & lack of infinity cache

> RGT on L1 cache pooling/sharing & reduced L2 cache & lack of infinity cache

LoL, every Radeon generation was supposed to be Nvidia's nightmare 😀 🙄

marees

Platinum Member

> RGT on L1 cache pooling/sharing & reduced L2 cache & lack of infinity cache

YouTube comment:

Introduction of shared L1 cache organization effectively eliminates data replication across GPU cores, improving collective hit rate and efficiency at the cost of increased latency for certain workloads (addressed by new mechanisms), and die area. Basically, net improvement in peak throughput.
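To put a number on the "eliminates data replication" part, here is a toy Python model, not anything taken from the video or from AMD. It assumes plain LRU replacement, four cores all streaming over the same working set, and made-up sizes; it ignores the extra latency the comment mentions. With private caches every core keeps its own copy and thrashes; with one pooled cache of the same total capacity the set fits once.

```python
from collections import OrderedDict


class LRUCache:
    """Minimal LRU cache that only counts hits and accesses."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.lines = OrderedDict()
        self.hits = 0
        self.accesses = 0

    def access(self, address):
        self.accesses += 1
        if address in self.lines:
            self.hits += 1
            self.lines.move_to_end(address)  # mark as most recently used
        else:
            self.lines[address] = True
            if len(self.lines) > self.capacity:
                self.lines.popitem(last=False)  # evict least recently used


def simulate(num_cores, lines_per_core, working_set, passes, shared):
    """Return the collective hit rate for private or pooled L1 caches."""
    if shared:
        pool = LRUCache(num_cores * lines_per_core)
        caches = [pool] * num_cores  # every core talks to the same cache
    else:
        caches = [LRUCache(lines_per_core) for _ in range(num_cores)]
    for _ in range(passes):
        for address in range(working_set):
            for cache in caches:
                cache.access(address)
    unique = list({id(c): c for c in caches}.values())
    return sum(c.hits for c in unique) / sum(c.accesses for c in unique)


if __name__ == "__main__":
    # Hypothetical sizes: 4 cores, 16 lines each, 48-line shared working set.
    print("private:", simulate(4, 16, 48, 4, shared=False))
    print("shared: ", simulate(4, 16, 48, 4, shared=True))
```

The sizes are picked so the working set is bigger than one private cache but smaller than the pool, which is the case where sharing helps most; a workload with no overlap between cores would not show this gap.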

MrMPFR

Senior member

> not sure if xilinx stuff comes to ROCm or Instinct/radeon stuff
>
> what AMD has said is that they can do the equivalent of strix halo on xilinx, if needed. this was in response to competition from qualcomm & the likes on edge inference

This has nothing to do with ROCm, and the patent has broad applicability. It's the ML data format architecture to rule them all. No more using only FP8 or some other single format for training and inference. Mix and match everything: metadata-guided, content-adaptive data arrays combined with upcast circuitry that can convert the data into a different format or a higher-precision format as needed, depending on the workload.

ML architecture during training and inference could leverage any combination of the following (just examples) formats, ensuring maximum accuracy, speed, and minimal to no underflow: INT4, INT8, BFP4, FP4, FP8, FP32, MXFP4, MXFP8, MXFP16, and MXFP32.

From what I can read, it should be almost no work for LLM and ML devs. Data arrays can likely be tagged automatically based on their dynamic range: low dynamic range uses INT for maximum precision, while high dynamic range can use FP.

Critical data prone to quantization error with both FP and INT can use microscaled FP or, even better, AMDFP4 (MXFPx 2.0/NVFP4 clone). If that's not good enough, or devs want to spend precision where it counts, use either a higher-precision format or microscaling at a higher format (e.g. MXFP8).

So the idea is that precision-critical passes in an ML model, which are very select parts of the model, can use microscaling or higher precision, while everything else can go as low as it wants. For some portions of the model, FP2 and INT2 might even be worthwhile.

Edit: Rewrite to specify implementation better. + added new info about how this impacts ML models.
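A quick way to see why microscaling matters for data with a wide dynamic range is the Python sketch below. It is a simplification and not the patent's mechanism: it assumes 8-bit signed integer elements with one shared power-of-two scale per 32-element block, and compares that against a single scale for the whole tensor. The block size, bit width, sample data, and function names are all my own illustrative choices.

```python
import math


def quantize_block(values, bits=8):
    """Round-trip one block through signed ints with a shared 2**k scale."""
    qmax = 2 ** (bits - 1) - 1
    peak = max(abs(v) for v in values)
    if peak == 0.0:
        return [0.0] * len(values)
    # Smallest power-of-two scale that keeps the peak within +/- qmax.
    exponent = math.ceil(math.log2(peak / qmax))
    scale = 2.0 ** exponent
    return [max(-qmax, min(qmax, round(v / scale))) * scale for v in values]


def microscale(values, block_size=32, bits=8):
    """Quantize block by block so each block gets its own scale."""
    restored = []
    for start in range(0, len(values), block_size):
        restored.extend(quantize_block(values[start:start + block_size], bits))
    return restored


def mean_abs_error(original, restored):
    return sum(abs(a - b) for a, b in zip(original, restored)) / len(original)


# One block of tiny values next to one block of large values.
small = [0.001 * (i + 1) for i in range(32)]
large = [100.0 * (i + 1) for i in range(32)]
data = small + large

per_tensor = quantize_block(data)  # single scale for everything
per_block = microscale(data)       # one scale per 32 values

if __name__ == "__main__":
    print("per-tensor error:", mean_abs_error(data, per_tensor))
    print("per-block error: ", mean_abs_error(data, per_block))
```

With a single scale, the large values set the step size and the small block collapses to zero; with per-block scales, the small block keeps its own, much finer step.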
