DisEnchantment

Golden Member

Speculate at will

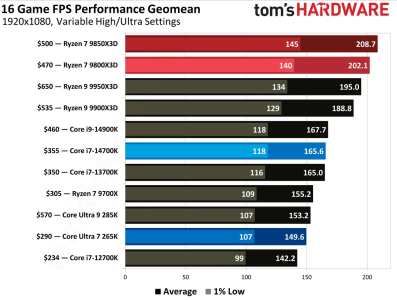

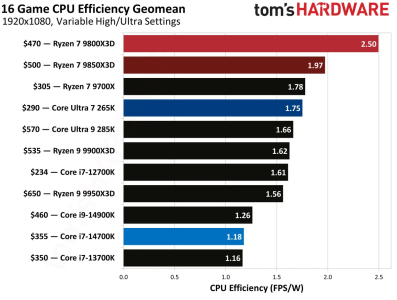

Just watched the GN review as well. The new part was not interesting, but I notice that Z4 vs Z5 (both vanilla) is still highly confusing: Z5 outperforms on average, but Z4 has better minimum frame rates in some games and is faster in some synthetic tests. I thought we'd have more conclusive answers by now and that Z5 would age better. Maybe it will in another 2 or 4 years with more software updates...

9850X3D Reviewed:

AMD Ryzen 7 9850X3D review: The world's fastest gaming processor, again

3% more performance, 30% more power; the Ryzen 7 9850X3D's victories feel hollow. (www.tomshardware.com)

“3% more performance, 30% more power”

Let’s hope 9950X3D2 performs better.
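For what it's worth, the headline numbers can be turned into a perf-per-watt figure. A minimal sketch, assuming the 3% and 30% are average uplifts measured against the same baseline part (the function name is made up for illustration, it is not from the review):

```python
def perf_per_watt_change(perf_gain: float, power_gain: float) -> float:
    """Return the relative change in performance per watt.

    Both arguments are fractional increases, so 0.03 means +3%.
    """
    return (1.0 + perf_gain) / (1.0 + power_gain) - 1.0


# Headline figures quoted above: +3% performance, +30% power.
change = perf_per_watt_change(0.03, 0.30)
print(f"Perf per watt change: {change:+.1%}")  # Perf per watt change: -20.8%
```

So under that assumption the new bin does roughly a fifth less work per watt than the part it replaces.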

“Maybe it will in another 2 or 4 years with more software updates...”

What software change, other than compiling specifically for znver5, which is never happening on Windows, did you suppose would benefit Zen 5 more than Zen 4?

So you think 3% perf improvement for 30% more power and higher price is fine/good? Not sure if serious.

“Posted on the Panther Lake thread with more detail: you can get a full Halo 392 laptop, the 'ASUS TUF', for around 1500 bucks now. Panther Lake machines START from 1500, up to 2500. DOA.”

If I wanted to spend $1500 on an ugly plastic brick with poor battery life, why wouldn't I get something with a dGPU instead?

“So you think 3% perf improvement for 30% more power and higher price is fine/good? Not sure if serious.”

Yes, let's remember context: this is a new bin/SKU, not a new part. Remember back in like 2002, when AMD and Intel would release new bins almost every 3 months? This is just that.

This is cool and all, but if you think people complaining about 9850X3D being pointless is annoying, get ready to grab some popcorn and buckle in if this ever drops... 😵😵

“Unless you were born yesterday, you would know that the last 1% of performance is most costly in terms of $ and Watts.”

Exactly why I don't think it's worth it. Who will even notice a 3% perf improvement, unless you're gunning for benchmark bragging rights?

Why didn't AMD move Gorgon Point to N3P? Is there not an easy migration between N4P and N3P?

“Why didn't AMD move Gorgon Point to N3P? Is there not an easy migration between N4P and N3P?”

Not easy, not design compatible.

“Why didn't AMD move Gorgon Point to N3P? Is there not an easy migration between N4P and N3P?”

It's a full node with very different design rules.

“It's a full node with very different design rules.”

...and a crapload of money.

Doing a full IP port for a nothingburger part made of old IP is just a waste of time.

“...and a crapload of money.”

Not a money problem; AMD's rich.

“This is cool and all, but if you think people complaining about 9850X3D being pointless is annoying, get ready to grab some popcorn and buckle in if this ever drops... 😵😵”

It will be the exact people that have been clamouring for it. All so predictable.

“It will be the exact people that have been clamouring for it. All so predictable.”

Of course. All the same. Always.

“you can get Halo 392 full laptop 'ASUS TUF' for around 1500 bucks now”

I don’t want an ASUS TUF. What now?

“A straight port of Strix Point to N3P would have netted next to nothing of importance. They would have gotten maybe another 200 MHz of clock speed over Gorgon Point with the same exact logic. The RDNA 3.5 iGPU might have gained 5% peak clock over Gorgon Point. The die would have shrunk by, what, 10% for a net increase in per chip cost. Fully loaded power draw would have maybe gone down a few percent. It wouldn't be worth it without substantive changes that would require a new floorplan. Once you start to mess with the floorplan, costs go up dramatically.

“If they had REALLY wanted, they could have done an optimized shrink that made the minor logic changes to get the best performance from the new node as well as changing the floorplan to accommodate a 16MB MALL cache. Doing a MALL cache like that, gaining 400 MHz in iGPU clock speed and supporting a couple bins higher in LPDDR5X RAM would have gone a LONG way to bringing the iGPU up to Panther Lake performance levels. Maybe not beat it, but the difference wouldn't be compelling in any way. The extra few hundred MHz of ST boost speed would have kept it ahead of PTL in most every benchmark, and the MT performance would see a notable uplift. But, BUT, none of that would have justified the increase in costs and wouldn't have done much for market volume.

“In other words, a waste of money. Save the money and sell what you already have while you work on something MUCH better.”

Why are they porting RDNA 3.5 to N3 then?
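On the claim quoted above that a roughly 10% die shrink would still mean a net increase in per chip cost, here is a rough back-of-the-envelope sketch. It assumes dies per wafer scale inversely with die area and that yield is the same on both nodes, which ignores edge losses and defect density. The 1.25 wafer price ratio is a purely hypothetical placeholder, not a real N4P or N3P price, and both function names are made up for illustration.

```python
def break_even_wafer_price_ratio(area_shrink: float) -> float:
    """Wafer price ratio (new node / old node) at which cost per die is unchanged.

    Assumes dies per wafer scale as 1 / die area and equal yield on both nodes.
    """
    if not 0.0 <= area_shrink < 1.0:
        raise ValueError("area_shrink must be in [0, 1)")
    return 1.0 / (1.0 - area_shrink)


def cost_per_die_change(area_shrink: float, wafer_price_ratio: float) -> float:
    """Relative change in cost per die under the same simplifying assumptions."""
    return wafer_price_ratio * (1.0 - area_shrink) - 1.0


# 10% shrink, as guessed in the quoted post.
ratio = break_even_wafer_price_ratio(0.10)
print(f"Break-even wafer price increase: {ratio - 1.0:+.1%}")  # +11.1%

# Hypothetical 25% pricier wafer, for illustration only.
print(f"Cost per die change: {cost_per_die_change(0.10, 1.25):+.1%}")  # +12.5%
```

In other words, under these assumptions any wafer price premium above about 11% makes the shrunk die more expensive per chip, before counting the cost of the port itself.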