Question x86 and ARM architectures comparison thread.

Page 35 - Seeking answers? Join the AnandTech community: where nearly half-a-million members share solutions and discuss the latest tech.

poke01

Diamond Member

All of this is pure BS.

- Optimized MacOS vs Windows (currently a mess)

- 16k paging on ARM (Apple) vs 4k paging (x86, other ARM CPUs) (according to Google, 16k paging is 5-10% faster depending on workload. Link: https://android-developers.googleblog.com/2025/07/transition-to-16-kb-page-sizes-android-apps-games-android-studio.html)

- Better node for ARM (Apple, Qualcomm), which translates to lower power draw

- Not running the same binary

- Also let's not forget things like: if SoC == benchmark software's favourite brand, then Score = Score + (5-10)%
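
For anyone wondering what the page-size point in that list amounts to mechanically: a larger page lets each TLB entry cover more memory, so the same TLB maps a bigger working set. A rough sketch of that arithmetic follows. The 2048-entry TLB is a made-up figure for illustration, not any real core's, and this says nothing about how much of the quoted 5-10% comes from this effect.

```python
import mmap


def tlb_reach_bytes(entries: int, page_size: int) -> int:
    """Memory covered by `entries` TLB entries when each maps one page."""
    return entries * page_size


# Hypothetical TLB size, chosen only for illustration.
ENTRIES = 2048

for page_size in (4 * 1024, 16 * 1024):
    reach_mib = tlb_reach_bytes(ENTRIES, page_size) / (1024 * 1024)
    print(f"{page_size // 1024} KiB pages: {reach_mib:.0f} MiB of TLB reach")

# Page size of the machine running this script, as reported by Python.
print("this system:", mmap.PAGESIZE, "bytes per page")
```

With the same entry count, 16 KiB pages give four times the reach of 4 KiB pages.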

Why? Apple CPUs were also tested on Linux and performance was the same or better. So go troll elsewhere

johnsonwax

Senior member

> Why not? For ideology or money anyone can do anything. SPEC consortium is not free of those.

Pretty impressive they built that bias in 3 years before the M1 was even announced.

poke01

Diamond Member

> Pretty impressive they built that bias in 3 years before the M1 was even announced.

You can see the source code as well. So clearly that person is upset that x86 is losing.

gdansk

Diamond Member

> Keep in mind AMD and Intel will introduce SME-like extension that is shared between cores to client in 2 years and Geekbench will support it. So all you x86 fans better shut up now about SME.

I will not. AMX units separate from the core are just as pointless to add to a CPU benchmark. Removing AES was great. Adding support for matrix accelerators separate from the core was a mistake. Nice way of nearly immediately making the GB6 composite more dubious than it needed to be. But at least when Intel and AMD game their composite scores - the only bit reported by every "tech journalist" - they will be perhaps more comparably bogus.

poke01

Diamond Member

> I will not. AMX units separate from the core is just as pointless to add to a CPU benchmark. Removing AES was great. Adding support for matrix accelerators separate from the core was a mistake. Nice way of nearly immediately making GB6 composite more dubious than it needed to be. But at least when Intel and AMD game their composite scores - the only bit reported by every "tech journalist" - will be perhaps more comparably bogus.

Well, you better get used to it. These companies will always find ways to game benchmarks. I agree with you, it's just that always harping on about SME/AMX is annoying.

MerryCherry

Member

> If nothing else that shows the Oryon 2 M being well handled by the A725.
>
> Even the A19-E core isn't that much of an improvement over A725 in INT workloads, and A18-E is beaten by the A725 in both INT and FP!! 😮
>
> Quite pleasantly surprised by that info!

specifically the implementation by Xiaomi in their Xring O1 chip. They apparently used custom designed cell libraries and a bunch of other tricks.

The A725 in Mediatek isn't that good.


MerryCherry

Member

M2 and M3 are pretty old now, why is there only a single data point for each?

So why does the M4 review do the same thing? There's a line for the Qualcomm chip but no Apple chips. Are they trying to draw attention toward the 8 Gen3?

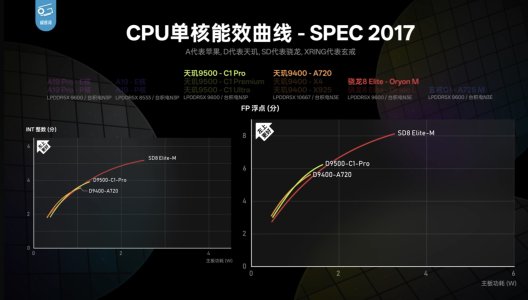


edit: and the iPhone 17 review video. All the Apple chips have a dot and all the Android chips have a line.

> I'm guessing he never wrote the script to get the data points to draw the curve.

This probably has to do with the fact that they need to crack the OS to draw the lines (by setting custom clock speeds). The Android results were obtained by rooting the phones I believe, and the results for x86/X Elite were obtained through Linux/WSL. Jailbreaking iPhones is hard these days, and only M1/M2 have Asahi Linux support.

I mean, if your focus is on x86/Android, then the x86/Android curves are useful and Apple Silicon is this 'oh yeah, there's also this thing which suggests what's possible'.

> specifically the implementation by Xiaomi in their Xring O1 chip. They apparently used custom designed cell libraries and a bunch of other tricks.
>
> The A725 in Mediatek isn't that good.

MTK seems to just coast on minimalist implementations.

As for Xiaomi they are risking.... er.... people getting annoyed with them for being too good at their jobs going by the history with Huawei.

> Keep in mind AMD and Intel will introduce SME-like extension that is shared between cores to client in 2 years and Geekbench will support it. So all you x86 fans better shut up now about SME.

My problem is not the SME cluster, it's that they are part of the ST benchmark. That's about it.

Nothingness

Diamond Member

> Could it be because they have no proper SVE2 support?

SVE2 wouldn't bring magical improvements to SIMD performance unless vector length is at least 256-bit. And this is where Arm dropped the ball: why should a SW dev bother with supporting SVE when there's no significant improvement over NEON? They should have tried at least a half rate 256-bit implementation to kickstart SVE adoption; AMD's half rate AVX-512 has shown this can still bring nice speedups over AVX2. IMHO this is a missed opportunity for SVE adoption.

> Thing is that’s an easy fix if Apple ever wanted to take vector workloads seriously. Their user base is mostly MacBook users and I bet less than 1% need SVE2 support.
>
> Now as to why Neoverse doesn’t have parity with AMD is because ARM is led by idiots when it comes to server.

The problem is that most Arm designed cores still target markets where workloads that need high vector performance are better served by dedicated IP (even Apple). And Arm isn't large enough a company to design multiple cores with vastly different microarchitectures to address all markets (even Intel and AMD don't do this).

Nothingness

Diamond Member

> Another flawed benchmark and result. Apple CPU's power draw should be 2x the reading from the Apple software reading. So those 7W and 9W for M1 and M5 should be 14W and 18W. Still good but not that impressive when using real power draw numbers. Also forgetting TSMC 3nm vs 4nm?
>
> And many of those ARM vs x86 benchmarks are really apple (fruit) vs orange (fruit), where ARM (Apple) has a huge advantage vs x86. Like:
>
> - Optimized MacOS vs Windows (currently a mess)
> - 16k paging on ARM (Apple) vs 4k paging (x86, other ARM CPUs) (according to Google, 16k paging is 5-10% faster depending on workload. Link: https://android-developers.googleblog.com/2025/07/transition-to-16-kb-page-sizes-android-apps-games-android-studio.html)
> - Better node for ARM (Apple, Qualcomm), which translates to lower power draw
> - Not running the same binary
> - Also let's not forget things like: if SoC == benchmark software's favourite brand, then Score = Score + (5-10)%
>
> When you subtract those advantages for ARM (Apple), x86 is not that far behind.
>
> So in conclusion, most ARM (Apple) vs x86 comparisons are flawed. It is the best case scenario for ARM (Apple) and the worst case scenario for x86.

You're at the very least 5 years late to the party. It was funny to read x86 fanatics claim that Arm/Apple was only good at making phone/tablet chips and that they would not be competitive in other markets. They had at least the excuse that no concrete evidence existed yet, an excuse you don't have.

I won't go through each point (though the "not running the same binary" is very funny), but the power one is interesting. It's been proven that the SW power metrics of Apple are not very accurate. But I guess it's partly the same for x86 machines. The only way to make a fair comparison would be to use HW probes, something most reviewers don't have the luxury to do.

> The problem is that most Arm designed cores still target markets where workloads that need high vector performance are better served by dedicated IP (even Apple). And Arm isn't large enough a company to design multiple cores with vastly different microarchitectures to address all markets (even Intel and AMD don't do this).

Server CPUs take longer to validate as well. You can't do that yearly unless you have lots of money.

gdansk

Diamond Member

They can release new server platforms 'yearly' if you have enough designs in validation simultaneously.

It was simply thought to be not worth it since server upgrade cycles aren't that short. But if you're building AGI CPUs maybe everything goes out the window. With infinite return on investment everything looks doable. In space.


> I won't go through each point (though the "not running the same binary" is very funny), but the power one is interesting. It's been proven that the SW power metrics of Apple are not very accurate. But I guess it's partly the same for x86 machines. The only way to make a fair comparison would be to use HW probes, something most reviewers don't have the luxury to do.

The Intel laptops are pretty accurate. Microsoft even says RAPL (the software-derived one) is really very similar to hardware measurements. Intel uses the same metric for their power management, and so do tester utilities like HWInfo.

Anyways if you really wanted to measure power, you can measure from AC, time drain to battery, or heck use a multimeter and solder connections.
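
For reference, the software-derived RAPL number can be sampled on Linux through the powercap sysfs interface, which exposes a cumulative energy counter in microjoules. A minimal sketch, assuming the package domain is at `intel-rapl:0` (the zone index varies by machine) and that the script is allowed to read the counter, which usually needs root. The helper names are made up for illustration.

```python
import time
from pathlib import Path

# Assumed location of the package-0 RAPL zone; check /sys/class/powercap on your machine.
RAPL_ZONE = Path("/sys/class/powercap/intel-rapl:0")


def average_power_watts(e0_uj: int, e1_uj: int, dt_s: float, max_range_uj: int) -> float:
    """Average power between two energy counter readings taken dt_s seconds apart."""
    delta_uj = e1_uj - e0_uj
    if delta_uj < 0:
        # The counter wrapped around between the two readings.
        delta_uj += max_range_uj
    return delta_uj / 1e6 / dt_s


def sample_package_power(interval_s: float = 1.0, zone: Path = RAPL_ZONE) -> float:
    """Read energy_uj twice and return the average package power in watts."""
    max_range = int((zone / "max_energy_range_uj").read_text())
    e0 = int((zone / "energy_uj").read_text())
    t0 = time.monotonic()
    time.sleep(interval_s)
    e1 = int((zone / "energy_uj").read_text())
    dt = time.monotonic() - t0
    return average_power_watts(e0, e1, dt, max_range)
```

Calling `sample_package_power()` while a benchmark runs gives a figure to set against a wall or battery measurement taken over the same interval.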

> So in conclusion, most ARM (Apple) vs x86 comparison is flawed. It is best case scenario for ARM (Apple) and worst case scenario for x86.

There may be some vertically integrated advantages there, but the advantage Apple has is so big, it makes those look like a drop in the bucket.

This is like when Nvidia would put out new drivers a month after ATI would release a new card, and the new driver would have some spiffy feature that boosted performance enough to make new ATI parts relevant (like that Lightspeed memory driver). But when ATI came out with their R300, Nvidia could no longer pull such things since R300 was far superior to whatever Nvidia had.

Or more recent examples like Xe3 on Pantherlake where people are like "what about drivers, and iso process?" Well, Xe3 is N3E vs N3B on Lunarlake so it's basically a sidegrade in process, you are talking mid single digit % gains. And the ~50% gain at same TDP over competition is way more than N3E is over N4.

The few % advantages Apple may have are dwarfed by real uarch advantages that Apple has, not necessarily the ISA. You could say INDIRECTLY ARM has an advantage over x86, because all the mindset is at ARM, people *think* x86 is going to die, and you have the top engineers going there. But it's not apples-to-apples.

Covfefe

Member

> but the power one is interesting. It's been proven that the SW power metrics of Apple are not very accurate. But I guess it's partly the same for x86 machines. The only way to make a fair comparison would be to use HW probes, something most reviewers don't have the luxury to do.

Geekerwan apparently replaces the device's battery with a power monitor for phones (timestamped video). Not sure if he does the same for laptops or not.

Either way, his numbers line up pretty close with wall power measurements I've seen elsewhere. In notebookcheck's MacBook Pro 14 M4 (non-pro) review, they measured cinebench single-core power draw at 5.2 watts in powermetrics CPU power, and 11.9 watts when measured at the wall.

> Geekerwan apparently replaces the device's battery with a power monitor for phones (timestamped video). Not sure if he does the same for laptops or not.
>
> Either way, his numbers line up pretty close with wall power measurements I've seen elseware. In notebookcheck's MacBook Pro 14 M4 (non-pro) review, they measured cinebench single-core power draw at 5.2 watts in powermetrics CPU power, and 11.9 watts when measured at the wall.

Near 7W is a lot of difference considering there is no screen power consumption, so the CPU power reading is off. There is only the SoC/SSD/WiFi and motherboard to power.
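
To put numbers on the two notebookcheck figures quoted above, here is a quick sketch of the gap and ratio between the powermetrics reading and the wall reading. The function name is made up for illustration. The wall figure covers everything downstream of the plug, so the ratio is at most an upper bound on how far off the CPU-only reading could be.

```python
def power_gap(software_w: float, wall_w: float) -> tuple[float, float]:
    """Return (absolute gap in watts, wall reading divided by software reading)."""
    return wall_w - software_w, wall_w / software_w


# Figures from the notebookcheck MacBook Pro 14 M4 review cited in the thread.
gap, ratio = power_gap(software_w=5.2, wall_w=11.9)
print(f"gap: {gap:.1f} W, wall is {ratio:.2f}x the software reading")
```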


Jan Olšan

Senior member

> The Intel laptops are pretty accurate. Microsoft even says RAPL(software derived one) is really very similar to hardware measurements. Intel uses the same metric for their power management, so does tester utilities like HWInfo.

AFAIK AMD's package power reading should also be fairly accurate. Not sure about Intel who are likely also upping their game, but AMD CPUs should have actual sensors (in large numbers) actually monitoring the power that goes in, it's not like Apple's theoretical model based estimation (where it's a question how close to reality it is at the moment).

(They are processor-level sensors so they don't include VRM losses).
