
Question x86 and ARM architectures comparison thread.

Page 31 - AnandTech community

poke01

Diamond Member

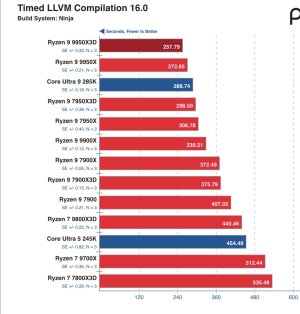

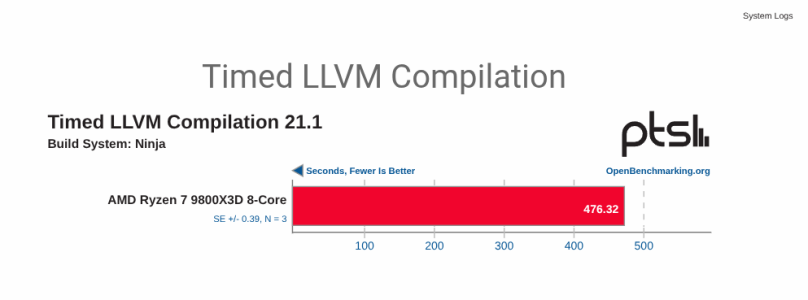

Btw the compilers aren’t comparable. GCC 16 didn’t even exist when this was tested.

GCC 13 vs GCC 16 + Clang 16 oof

It should just say Xcode 16.1 or just Apple clang 16.1; I don’t know why Michael adds the misleading stuff.

> Btw the compilers aren’t comparable. GCC 16 didn’t even exist when this was tested.
>
> It should just say Xcode 16.1 or just Apple clang 16.1; I don’t know why Michael adds the misleading stuff.

Sure, but Apple clang 16 is from 2024 and GCC 13 is from 2023, so I doubt the changes for Zen 5/ARL would be in GCC 13. He would have been better off using an LLVM-based compiler from around the same timeframe, which would have made for a fairer comparison. And if he is using Apple's proprietary compiler, then Intel/AMD might as well use their own compiler toolchains.

poke01

Diamond Member

Here is a newer GCC 14.2; it’s faster than 13.2. Michael didn’t test FFmpeg compilation in this review, but we can compare LLVM compilation.

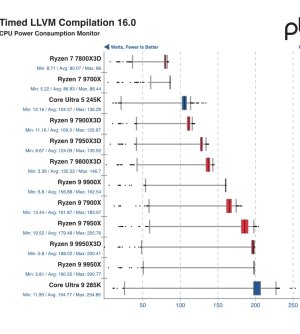

Also these don’t look like wall readings to me.

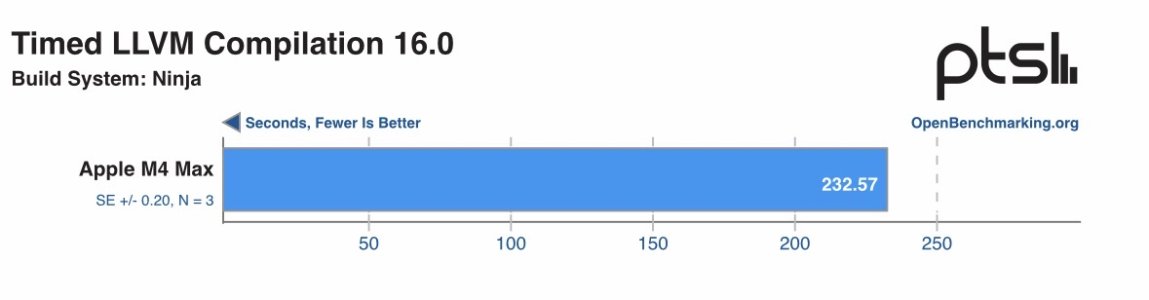

And here is the M4 Max in a MacBook.

M4Max Benchmarks [2503281-NE-M4MAX949371] - OpenBenchmarking.org

I’m going to test my own 9800X3D on LLVM and see the time difference.


> It should just say Xcode 16.1 or just Apple clang 16.1; I don’t know why Michael adds the misleading stuff.

Because he doesn't care about the fine details but about scale and automation; that is why, when you scrutinize the benchmarks from Phoronix, you will see all kinds of inconsistencies😉 I guess on macOS gcc simply aliases clang for convenience.

> I doubt the changes for Zen 5/ARL would be in GCC 13. He would have been better off using an LLVM-based compiler from around the same timeframe, which would have made for a fairer comparison. And if he is using Apple's proprietary compiler, then Intel/AMD might as well use their own compiler toolchains.

Support for Zen 5 in mainstream LLVM is still a joke. It's a copy-paste of the Zen 4 backend, which itself only recently got fixed and was a copy-paste of Zen 3. AMD is dropping the ball there.

> Michael didn’t test FFmpeg compilation in this review, but we can compare LLVM compilation.
>
> I’m going to test my own 9800X3D on LLVM and see the time difference.

Be sure to build the same target, since by default each build will compile for the architecture it's running on, which triggers different code/data paths in the compiler😉 Make sure to match the same options and, depending on the platform, use the right compiler package 😉 [As in my table, depending on the package source there was a large difference between 18.1.8 clang builds.]
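One way to follow the "match the same options" advice, as a minimal sketch: a POSIX shell helper that spells out every CMake setting for an LLVM build, so two machines differ only in the compiler passed in. The function names, the build directory, the target list, and pinning lld as the linker are illustrative assumptions, not what PTS or anyone in this thread used. Setting DRY_RUN=1 prints the commands instead of running them.

```shell
# Print each command, and execute it unless DRY_RUN is set.
run() { echo "+ $*"; [ -n "${DRY_RUN:-}" ] || "$@"; }

# Configure LLVM with every option spelled out, so two machines differ
# only in the compiler under test. Paths and values are illustrative.
configure_llvm() {
  src=$1      # llvm-project checkout (hypothetical path)
  cc=$2       # C compiler under test, e.g. clang or gcc
  cxx=$3      # matching C++ compiler, e.g. clang++ or g++
  targets=$4  # same backend list on every machine, e.g. "X86;AArch64"
  run cmake -G Ninja -S "$src/llvm" -B build \
    -DCMAKE_BUILD_TYPE=Release \
    -DCMAKE_C_COMPILER="$cc" \
    -DCMAKE_CXX_COMPILER="$cxx" \
    -DLLVM_TARGETS_TO_BUILD="$targets" \
    -DLLVM_USE_LINKER=lld  # same linker everywhere; requires lld installed
}

# The build step is the part worth timing on each machine.
build_llvm() { run ninja -C build; }
```

Pinning LLVM_TARGETS_TO_BUILD keeps the set of backends being compiled identical, and pinning the linker removes the ld vs lld question from the comparison.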

Covfefe

Member

> How did they measure power for the x86 machines? As I previously wrote, I only trust power at the wall; after all, this is what the machines I run consume.

Software readings for everything. Here's the review.

The M4 showing was all the more impressive when looking at the CPU power consumption exposed by powermetrics compared to the Intel/AMD RAPL/PowerCap results on Linux.
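For context on what a "software reading" is on Linux: RAPL exposes a cumulative energy counter in microjoules through the powercap sysfs interface, and average power is the energy delta divided by the sampling interval. This sketch assumes the package zone is named intel-rapl:0 (the name the kernel typically uses on recent AMD parts as well) and that energy_uj is readable, which newer kernels restrict to root. It measures CPU package energy, not power at the wall; the function names are hypothetical.

```shell
# Average power in watts from two samples of a RAPL energy counter.
# Args: start_uj end_uj seconds [max_energy_range_uj]
# If the counter wrapped between samples, the max range is added back.
avg_watts() {
  awk -v s="$1" -v e="$2" -v t="$3" -v r="${4:-0}" 'BEGIN {
    d = e - s
    if (d < 0 && r > 0) d += r
    printf "%.2f\n", d / 1e6 / t
  }'
}

# Sample the package zone over an interval (default 10 s).
# Assumption: zone intel-rapl:0 exists and energy_uj is readable.
sample_rapl() {
  zone=/sys/class/powercap/intel-rapl:0
  secs=${1:-10}
  e0=$(cat "$zone/energy_uj")
  sleep "$secs"
  e1=$(cat "$zone/energy_uj")
  avg_watts "$e0" "$e1" "$secs" "$(cat "$zone/max_energy_range_uj")"
}
```

Running sample_rapl while a build is in progress gives a number comparable to what RAPL-based tools report, which is why it can differ noticeably from a wall meter.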

Nothingness

Diamond Member

> I guess on macOS gcc simply aliases clang for convenience.

Correct. If one wants a real GCC, it can be installed via Homebrew and used this way:

$ gcc-15 --version

gcc-15 (Homebrew GCC 15.2.0) 15.2.0

$ gcc --version

Apple clang version 17.0.0 (clang-1700.6.3.2)
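To check which toolchain a name like gcc actually resolves to before recording a result, a small shell sketch can classify the first line of the --version output. The function name is hypothetical; the Apple clang and Homebrew GCC strings are the ones shown above, while the Intel oneAPI banner pattern is an assumption.

```shell
# Classify a toolchain by the first line of its --version output.
# Order matters: Apple clang must be checked before generic clang.
compiler_kind() {
  case "$1" in
    *"Intel(R) oneAPI"*) echo "icx" ;;          # assumed icx banner
    *"Apple clang"*)     echo "apple-clang" ;;
    *clang*)             echo "clang" ;;
    *GCC*|*gcc*)         echo "gcc" ;;
    *)                   echo "unknown" ;;
  esac
}
```

For example, compiler_kind "$(gcc --version | head -n 1)" on a stock macOS install should report apple-clang, matching the output above.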

poke01

Diamond Member

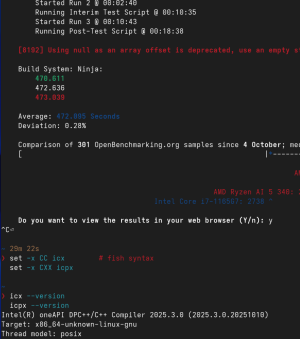

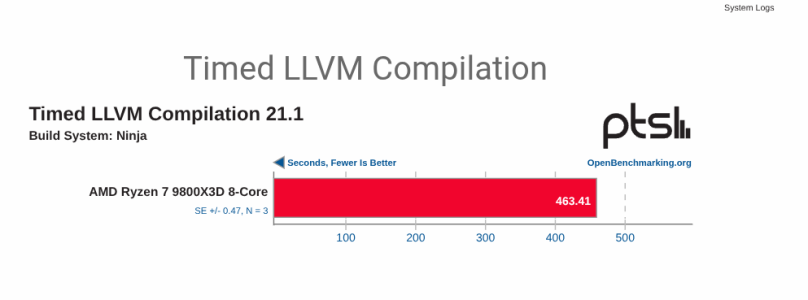

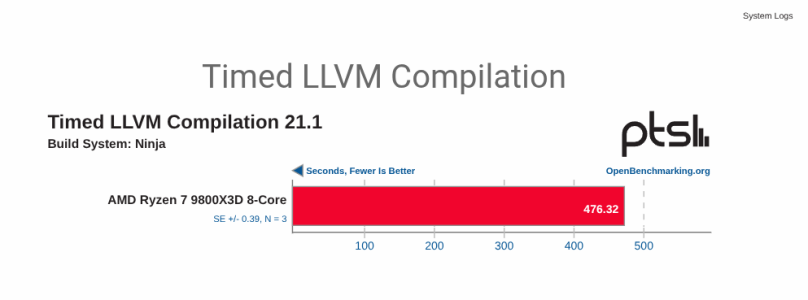

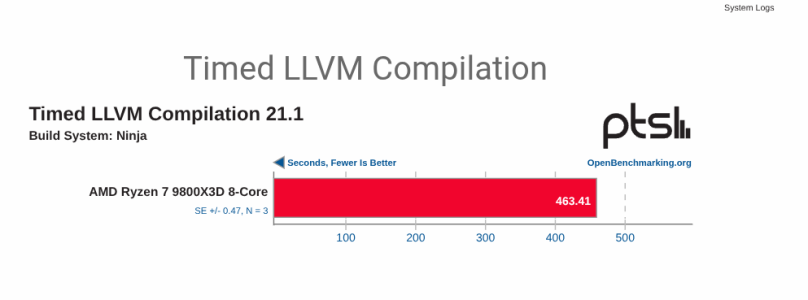

Tested on an undervolted AMD 9800X3D. Power consumption in btop was 95 W with a peak of 110 W. @MS_AT @511

Latest clang 21.1.6

openbenchmarking.org

openbenchmarking.org

Latest GCC 15.2.1

openbenchmarking.org

openbenchmarking.org

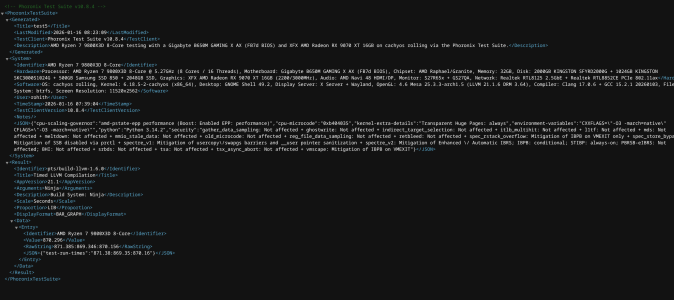

oh and AMD's complier is pure dogwater. Its based on clang 17.0.6.

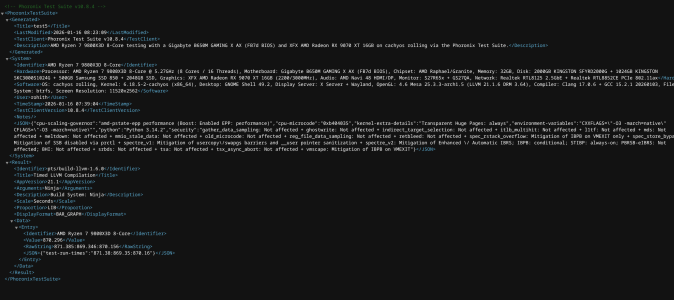

PTS crashed after it finished but so no web link but I managed a pic of the XML file.

It took 870 seconds, double the other compliers

Latest clang 21.1.6

Test4 Benchmarks [2601158-NE-TEST4834135] - OpenBenchmarking.org

Latest GCC 15.2.1

Test2 Benchmarks [2601151-NE-TEST2270825] - OpenBenchmarking.org

oh and AMD's complier is pure dogwater. Its based on clang 17.0.6.

PTS crashed after it finished but so no web link but I managed a pic of the XML file.

It took 870 seconds, double the other compliers

> Oh, and AMD's compiler is pure dogwater. It's based on clang 17.0.6.

I would expect it to be the slowest; after all, it's supposed to run extra optimization passes so the binary it produces is faster. I doubt anyone pays a lot of attention to how fast the compiler itself runs.

> Latest clang 21.1.6

I guess this was the distribution's clang, not the official clang, since the latest clang is 21.1.8? I am not sure how CachyOS is sourcing it, or whether they build it from scratch. Also, do you know how I can read the exact command used to build from OpenBenchmarking? I wasn't able to find it.

> Latest GCC 15.2.1

That is a surprisingly good result. I wonder if CachyOS is doing the sane thing and has ditched ld underneath in favour of lld. Or maybe it's the other way around and the build defaults to the system linker, which should be ld by default, so both are using the slow linker, hmm. I am too unfamiliar with Linux to do more than guess😉

> I know of a funny trick: you can use Intel's compiler and pass a generic flag 🤣 (like -O2 -march=x86-64-v3) or something, depending on what you pass to the compiler.

Well, you can run icx telling it to compile for znver5 outright; it's LLVM-based after all😉 [It's another thing altogether that AMD still has not managed to merge proper scheduler data into upstream LLVM.] https://godbolt.org/z/Ts6TxPef4

I just don't know whether Intel still does fancy AMD bottlenecking in their compilers; this might get rid of it.

poke01

Diamond Member

> How can I read from OpenBenchmarking the exact command used to build?

phoronix-test-suite install pts/build-llvm to install

phoronix-test-suite run pts/build-llvm-1.6.0 to run

And select 1 for Ninja when it asks you to choose.
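To repeat this run with different compilers, a thin wrapper can set CC and CXX for each invocation. This is a hypothetical helper: it assumes the build-llvm test profile's CMake step picks up CC/CXX from the environment (CMake reads them on a fresh configure), and the interactive prompt still needs answering (1 for Ninja, as above). DRY_RUN=1 prints the commands instead of running them.

```shell
# Print each command, and execute it unless DRY_RUN is set.
run() { echo "+ $*"; [ -n "${DRY_RUN:-}" ] || "$@"; }

# One-time install of the test profile, as given above.
install_profile() {
  run phoronix-test-suite install pts/build-llvm
}

# Run the LLVM build benchmark with a given C/C++ compiler pair.
# Assumption: the test profile honours CC/CXX from the environment.
bench_with() {
  run env CC="$1" CXX="$2" phoronix-test-suite run pts/build-llvm-1.6.0
}
```

Calling bench_with clang clang++ and then bench_with gcc g++ on the same machine keeps everything but the compiler constant.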

> I just don't know whether Intel still does fancy AMD bottlenecking in their compilers; this might get rid of it.

Their own compiler, I mean icc, is deprecated. Their new compiler (icx) is, as far as I understand, LLVM tuned with extra optimization passes for Intel hardware. So it does not cripple AMD chips the way the old one used to; it just doesn't apply the extra passes, but the generic tunings apply to AMD chips too. Mysticial is using icx to build y-cruncher for Zen, or at least was the last time I checked, and he found it the best available at the time for the purpose.

> phoronix-test-suite install pts/build-llvm to install
>
> phoronix-test-suite run pts/build-llvm-1.6.0 to run

Ah, I guess I was not precise enough. I meant the exact cmake command used to run the build itself, but I think it can be inferred from the PTS sources 🙂 Thanks anyway🙂

ICX is arguably the best compiler for x86_64, so I am not really surprised here, but I just was not sure regarding the AMD paths. Thanks for letting me know.

> Well, looks like the optimization is for Intel-only stuff

Why do you think so? I mean, the benchmark results presented here do not show, in my opinion, enough data to confirm or deny this claim.

1. ICX took longer to compile LLVM (clang) than mainline clang did, but we do not know how fast the compiled binary is. The extra time can come from running more optimization passes than vanilla clang does, so it may actually be the extra optimization that makes it slower.

2. We do not know how icx was built: was it built by icx, by gcc, or by clang? This can influence the performance of icx. For example, the "default" way of building a clang release, at least the one I remember, used three phases: 1) you build clang with the system compiler, whatever it might be, which gives you clang_1; 2) you use clang_1 to compile clang while gathering profile information for profile-guided optimization (PGO); 3) you produce clang_2 using clang_1, consuming the PGO data from step 2. clang_2 is then the final release artifact. The influence of that process can be seen in my previous posts, where the difference between the 18.1.8 builds came down to the memory allocator used and whether PGO was applied or not.

In other words, your statement might of course be true, but based on this thread alone I don't think we have sufficient evidence to back it up😉
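LLVM's in-tree PGO CMake cache automates a multi-stage flow similar to the three phases described above. The sketch below assumes an llvm-project checkout at the path you pass in, and takes the cache file clang/cmake/caches/PGO.cmake and the stage target names from LLVM's advanced-build documentation; check them against your LLVM version before relying on it. The function names and the build-pgo directory are hypothetical, and DRY_RUN=1 prints the commands instead of running them.

```shell
# Print each command, and execute it unless DRY_RUN is set.
run() { echo "+ $*"; [ -n "${DRY_RUN:-}" ] || "$@"; }

# Stages driven by LLVM's PGO cache (names assumed from LLVM's docs):
#   stage1: clang built by the system compiler
#   stage2-instrumented: instrumented clang used to collect profiles
#   stage2: final clang built by stage1 using the merged profile data
pgo_bootstrap() {
  src=$1  # path to an llvm-project checkout
  run cmake -G Ninja -C "$src/clang/cmake/caches/PGO.cmake" \
    -S "$src/llvm" -B build-pgo || return 1
  run ninja -C build-pgo stage2-instrumented-generate-profdata || return 1
  run ninja -C build-pgo stage2
}
```

The same knobs (host compiler for stage1, whether profiles are used) are exactly what can make two "clang 18.1.8" packages perform differently.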


I am dumb, I mistook it for runtime. My bad.

johnsonwax

Senior member

> Intel compiler is faster than GCC but mainline clang is the best compiler

Looks like Apple won the compiler wars.

poke01

Diamond Member

GB10 vs Strix Halo CPU

wajeehakhan

Junior Member

From my understanding, ARM cores are extremely efficient in single-threaded tasks under low power, which makes them great for laptops and mobile devices. On the other hand, x86 cores still shine in multi-threaded workloads and server environments.

I think both architectures have their strengths depending on the use case, and it’s exciting to see innovation from both sides. Looking forward to seeing how efficiency and performance evolve in the coming years!


cytg111

Lifer

I don't want to start a new thread for something that might be meh, so even though this is neither x86 nor ARM, the question is: is it comparable? Q.ANT.

As I understand it, it's not a phantom product; it's rolling through fabs right now ... photonic computing, and it wipes out anything Blackwell/Rubin.


Nvidia has a stranglehold on AI with the GPU, which is programmable and much more general-purpose than a specialized accelerator like that. Proprietary, specialized accelerators are always faster, but it's usually the general-purpose option that takes the greater share, unless the accelerators are at least an order of magnitude faster. Nvidia went from gaming to workstation 3D, then to crypto mining, and when that popped, they went to AI. GPUs find a different niche, while special-purpose chips hit a dead end.

It's the computer equivalent of a person only being able to do one thing well. The world/trend changes and the person is out of a job.
