
Question: x86 and ARM architectures comparison thread

Page 32 (AnandTech forums)

adroc_thurston

Diamond Member

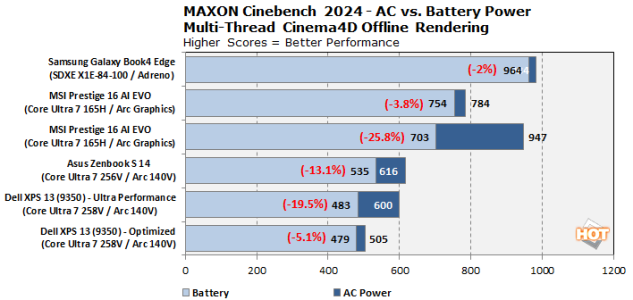

People benching nT perf on battery aren't serious and should consider different life decisions.

MaxTech did some benchmarks with Panther Lake. I will post the relevant screenshots here, so you all don't need to listen to the hyper voice every 2 seconds.

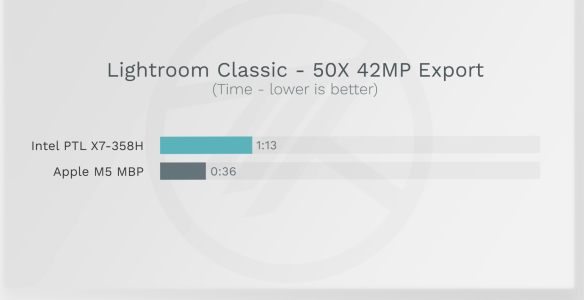

Lightroom:

View attachment 138344

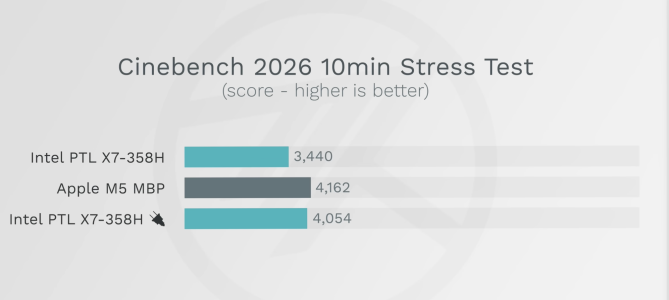

Cinebench 2026:

View attachment 138345

Gotta say Intel/ASUS still drops performance on battery by default, which is probably why it lost in the Lightroom test.

poke01

Diamond Member

I mean, laptop reviewers do check it to test performance degradation on battery, but the 10-minute test is a bit much.

techjunkie123

Member

I'll bite. Why?

I think even if it had been plugged in, it would still have lost the test.


CouncilorIrissa

Senior member

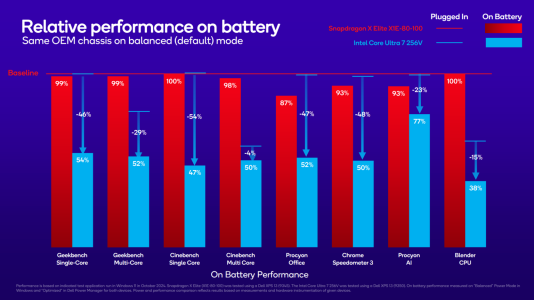

Two reasons:

- Most people don't run nT-heavy workloads such as rendering on battery, mostly because the battery isn't going to last very long;

- Lightly threaded workloads actually drop much more perf on battery than heavily threaded ones.


poke01

Diamond Member

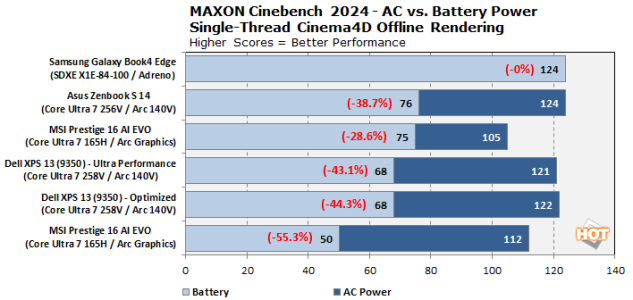

It’s not even rendering. Even basic stuff like a Lightroom export is slower on battery, and that also means video exports will be slower than when plugged in. Most people who buy these laptops will never change the power profiles, but they did pay for performance that they will never get on battery.

It’s why I highlighted it. No one sane is going to be doing rendering on a tiny iGPU in a professional capacity, but the Cinebench test highlights how even simple stuff is throttled when it doesn’t need to be.


johnsonwax

Senior member

Speak for yourself. Pretty nice gaming on my patio on my MBP, feet up. Battery lasts nearly all day, even under gaming load.

I've mentioned before that I did a lot of data science in my career. You would be surprised how much of my work was done in conference rooms, on my patio, on airplanes, at conferences, etc. A lot of the reason why people don't run heavy workloads is because Windows laptops suck, not because they don't have a need to run heavy workloads.

techjunkie123

Member

CouncilorIrissa's second point is valid. 1T should drop even less than nT, IMO, not the other way around.

I'm with johnsonwax's comment regarding the use of nT. I agree that no one is running cinememe on the go, but the reason people don't run stuff (data science, etc.) on Windows laptops is that they can't do it well unplugged. I personally do run heavy stuff on my Windows laptop unplugged, and it frustrates me that I have to plug it in to get max performance.

Nothingness

Diamond Member

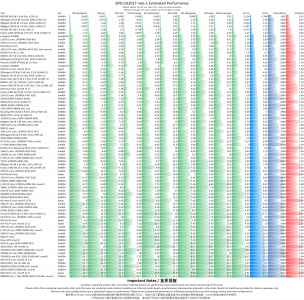

An interesting review of Cortex-X925 by Chips and Cheese:

Arm's Cortex X925: Reaching Desktop Performance

A big, high performance core from Arm

chipsandcheese.com

Very strong integer performance and performance per clock. FP is just good (I guess the 128-bit wide registers are the reason).

The heavy downgrade of the 9800X3D SPEC scores between this comparison and the original 9800X3D piece suggests they have changed something in the setup. [The 9900X also seems to score too high in the FP part.]

AMD's 9800X3D: 2nd Generation V-Cache

Following the first generation of V-Cache found in the Zen 3 and Zen 4 X3D SKUs, AMD is now following up with the second generation of V-Cache which is a major change for AMD in terms of packaging.

Maybe a different reviewer.

Other than that, nice write-up.

Nothingness

Diamond Member

In the older article the reviewer ran DDR5-6000. Here the reviewer runs DDR5-5600:

Running Zen 5 with faster DDR5-6000 instead of DDR5-5600 memory can slightly increase its integer score, but only to 11.9

It's also possible both reviewers used different compilers and/or flags. Too bad neither of them provided that information.

Elfear

Diamond Member


I must have been living in a hole, but X925 seems rather amazing: it basically matches AMD and Intel's best desktop chips (at least in SPEC), and at a much lower clock speed. It's crazy how much better it is than even Darkmont.

Am I misreading C&C's article? Is anyone else surprised at how performant X925 is?

CouncilorIrissa

Senior member

X925 is absolutely hueg of a core, so I'm not surprised to see it perform well, but I am surprised that it's this close in memory-latency-sensitive benchmarks such as 520.omnetpp.

Huang's SPEC data seems to more or less align with CnC.

And that BPU is genuinely world-class, this level of perf in 541.leela is very impressive.

Saylick

Diamond Member

Dang, seeing X925 go toe-to-toe on IPC with Apple's latest P core is very impressive. I believe they're on the same node, too (N3P), so now I'm curious how they compare in power and area.

View attachment 139198

LightningDust

Member


Cortex-X gen-to-gen improvements have been pretty crazy for a while now.

CouncilorIrissa

Senior member

Judging by the D9500, the C1U is a bit of an incremental update over X925 (understandable really, they can't keep making double-digit increases every year), but other than that, yeah, the Cortex X has been very impressive.

Schmide

Diamond Member

I think it's neat and telling that they went with a large private L2 with a small way-to-size ratio (1:4 way:MB), whereas Apple has a 4-6 core cluster sharing 12MB with a 1:1 size-to-way ratio.

They are really stepping into and in some ways (pun) past x86 cache provisioning.

Though Apple still has some advantages with their low-latency, L3-like L2.


They have made quite a few changes for an incremental upgrade.

khankhizer

Junior Member

Photonic chips like Q.ANT are interesting, but they’re not directly comparable to x86, ARM, or GPUs like NVIDIA Blackwell.

They’re usually highly specialized accelerators — potentially much faster for specific AI/math workloads — but far less flexible than general-purpose CPUs/GPUs. The real question is software ecosystem and real-world usability.

Would be interesting to see real benchmarks once silicon is widely tested.


Odd that FP is "just good", considering it was the first consumer ARM chip with 6x 128-bit NEON/SVE ALUs.

Nothingness

Diamond Member

There might be several explanations for that:

- the Ryzen still has wider total FP datapaths

- better vectorization (compiler and/or flags); instructions retired for cactubssn, roms (this one likely uses AVX-512 given the difference between Ryzen and Lion Cove), fotonik3d and wrf seem to show that

- few or none of the workloads can fully exploit 6 datapaths, or the X925 dispatch engine is not up to the task of feeding 6 units.

Just guessing, I'm not familiar with SPEC FP.
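A rough back-of-the-envelope sketch of the first point (total FP datapath width), assuming the thread's figure of 6x 128-bit NEON/SVE pipes for X925 and, as an assumption not stated in the thread, four 512-bit FP pipes for a desktop Zen 5 core. It only multiplies pipe count by pipe width; it ignores which pipes can do FMA, clock speed, and whether code is vectorized well enough to use the width.

```python
# Peak vector FP datapath width per core: pipes x bits per pipe.
# Assumed configs: X925 = 6 x 128-bit (from the thread),
# Zen 5 desktop = 4 x 512-bit (assumption for illustration only).
from dataclasses import dataclass


@dataclass(frozen=True)
class FpCluster:
    name: str
    pipes: int       # number of vector FP execution pipes
    width_bits: int  # width of each pipe in bits

    def total_bits_per_cycle(self) -> int:
        """Sum of vector widths across all FP pipes."""
        return self.pipes * self.width_bits

    def fp32_lanes_per_cycle(self) -> int:
        """Total 32-bit FP elements the pipes can process per cycle."""
        return self.total_bits_per_cycle() // 32


x925 = FpCluster("Cortex-X925", pipes=6, width_bits=128)
zen5 = FpCluster("Zen 5 (assumed config)", pipes=4, width_bits=512)

for core in (x925, zen5):
    print(f"{core.name}: {core.total_bits_per_cycle()} bits/cycle, "
          f"{core.fp32_lanes_per_cycle()} FP32 lanes/cycle")
```

Under these assumptions X925 gets 768 bits/cycle versus 2048 for Zen 5, so more pipes of narrower width can still add up to less raw FP width; the extra width only pays off when the compiler actually emits wide vector code, which ties into the second point.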

While both x86 vendors keep spending transistors on adding throughput (full 512b FP infrastructure, single-purpose ISA extensions, and advances in SMT), ARM still goes for general performance. Is this take correct?

Is there a die size comparison between X925 (or newer) and Zen 5/AVX 512 Intel?

adroc_thurston

Diamond Member

Zen 5 is just a chonkier core FP-wise, with a 384-entry FP PRF and stuff.

> While both x86 vendors keep spending transistors on adding throughput - fully 512b FP infrastructure, single-purpose ISA extensions, and advances in SMT

No.

> ARM still goes for the general performance. Is this take correct?

No.

AMD goes for server workload performance.

> AMD goes for server workload performance.

That's the throughput part.
