adroc_thurston

Diamond Member

Nope! That's the throughput part.

Most server workloads are NOT that. at all.

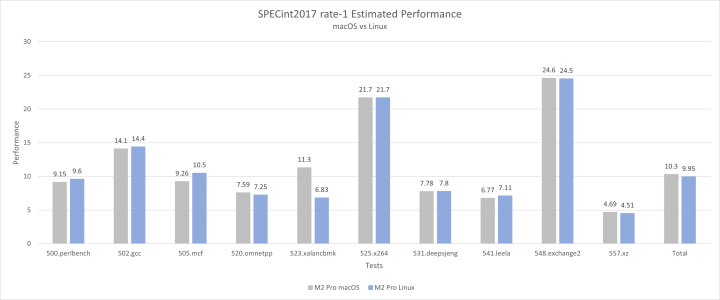

> Skymont is on a node refinement that is a decent bit behind, with a horrible uncore. We don't have an apples-to-apples comparison (pun not intended): insert core on Windows vs. the same core on macOS.

This was a Linux vs macOS SPEC17 comparison. macOS is 4% faster in SPEC.

> This was a Linux vs macOS SPEC17 comparison. macOS is 4% faster in SPEC.

Well, the issue is that he is using -O3 with Clang, whereas David Huang uses GCC 12 with -O2, and the flags matter. We also don't know how the power measurements were taken; Apple's power metrics shouldn't be taken at face value.

But the main point is that the M core uses only 2.5 watts to reach 10.5 points in SPEC Int. I don't think there's any core from others that comes close to that perf/W yet.
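As a rough sanity check on that claim, perf/W here is just the score divided by the power draw. A minimal Python sketch using only the two numbers quoted above; the function name is made up for illustration, and it says nothing about how the power was actually measured:

```python
def perf_per_watt(score: float, watts: float) -> float:
    """Return benchmark points per watt of power draw."""
    if watts <= 0:
        raise ValueError("watts must be positive")
    return score / watts


# 10.5 SPECint points at 2.5 W, as quoted in the post above.
m_core = perf_per_watt(10.5, 2.5)
print(f"{m_core:.1f} SPECint points per watt")
```

To compare another core, plug in its score and power from the same benchmark setup; numbers taken with different compilers or flags are not directly comparable.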

Very symmetric across all resources which allows them to implement a very basic form of SMT by simply statically partitioning everything in half.

he also tested gaming and Strix Halo won there. That’s embarrassing for Apple considering RDNA3.5 is one generation behind.

> he also tested gaming and Strix Halo won there. That’s embarrassing for Apple considering RDNA3.5 is one generation behind.

Halo is using a slightly tweaked 2022 architecture with about half the memory bandwidth of the 32 GPU core M5 Max. And it's on 4nm instead of 3nm. But I guess ~40W can solve lots of problems.

> he also tested gaming and Strix Halo won there. That’s embarrassing for Apple considering RDNA3.5 is one generation behind.

Doesn't Apple Silicon being TBDR mean it would be better to benchmark the same titles that have a native Mac port? Also, Apple Silicon relies on on-chip bandwidth for GPU bandwidth rather than DRAM, doesn't it?

> Also, Apple Silicon relies on on-chip bandwidth for GPU bandwidth rather than DRAM, doesn't it?

What? No.

> Doesn't Apple Silicon being TBDR mean it would be better to benchmark the same titles that have a native Mac port? Also, Apple Silicon relies on on-chip bandwidth for GPU bandwidth rather than DRAM, doesn't it?

Yeah, TBDR is a problem. Almost all AAA games are made for IMR.

> What? No.

It's a different graphics rendering technique. What I'm saying is that TBDR relies on on-chip cache bandwidth and efficiency to render tiles and passes before they are read from or written to DRAM, unlike the IMR approach that AMD uses for Strix Halo.

In Apple Silicon the DRAM is on-package, not on-chip. It is technically no different from DRAM soldered to the motherboard like most laptops, except that you get some power and space savings.

> Yeah, TBDR is a problem. Almost all AAA games are made for IMR.

Didn't Apple themselves already promote the M5's gaming performance gains in Cyberpunk? They should just benchmark the native Metal 3 version of the port vs Strix Halo until they hopefully update the old game to Metal 4.

> It's a different graphics rendering technique. What I'm saying is that TBDR relies on on-chip cache bandwidth and efficiency to render tiles and passes before they are read from or written to DRAM, unlike the IMR approach that AMD uses for Strix Halo.

IMRs haven't been IMRs for like 10+ years; they have binned things for ages.

> IMRs haven't been IMRs for like 10+ years; they have binned things for ages.

True, but it's still not what Apple uses, so a direct comparison is still literally apples to oranges unless it's the native port of the same title vs PC in a benchmark, imo.
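For anyone unsure what "binning" means in this exchange: a tiler sorts primitives into screen-space tiles before shading, so that each tile's working set can stay in on-chip memory. Below is a toy Python sketch of the binning step only. The 32-pixel tile size, the function name, and the bounding-box overlap test are all assumptions for illustration; this is not how any specific Apple or AMD GPU implements it.

```python
from collections import defaultdict

TILE = 32  # hypothetical tile edge length in pixels


def bin_triangles(triangles, width, height, tile=TILE):
    """Map each tile (tx, ty) to indices of triangles whose bounding box touches it."""
    bins = defaultdict(list)
    max_tx = (width - 1) // tile
    max_ty = (height - 1) // tile
    for idx, tri in enumerate(triangles):
        xs = [p[0] for p in tri]
        ys = [p[1] for p in tri]
        # Skip triangles whose bounding box is entirely off screen.
        if max(xs) < 0 or max(ys) < 0 or min(xs) >= width or min(ys) >= height:
            continue
        # Clamp the bounding box to the range of valid tile coordinates.
        tx0 = max(0, int(min(xs)) // tile)
        tx1 = min(max_tx, int(max(xs)) // tile)
        ty0 = max(0, int(min(ys)) // tile)
        ty1 = min(max_ty, int(max(ys)) // tile)
        for ty in range(ty0, ty1 + 1):
            for tx in range(tx0, tx1 + 1):
                bins[(tx, ty)].append(idx)
    return dict(bins)


# Two triangles on a 64x64 target: one inside a single tile, one straddling four.
tris = [
    [(0, 0), (10, 0), (0, 10)],
    [(30, 30), (40, 30), (30, 40)],
]
for tile_xy, members in sorted(bin_triangles(tris, 64, 64).items()):
    print(tile_xy, members)
```

A bounding-box test over-approximates coverage (a triangle can land in a tile it never actually touches), which is fine for a sketch but is one of many places real hardware is more careful.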

> he also tested gaming and Strix Halo won there. That’s embarrassing for Apple considering RDNA3.5 is one generation behind.

In Blender, the basic M5 outperforms the Strix Halo. There are still plenty of ways to optimise performance for gaming, but unfortunately the developers aren’t making full use of them.

> Idk how accurate this guy is, but it suggests that games need a good port or they will only use half of the GPU's power

Even PC doesn't get good game ports 🤣 🤣 🤣

> In Blender, the basic M5 outperforms the Strix Halo. There are still plenty of ways to optimise performance for gaming, but unfortunately the developers aren’t making full use of them.

I know it does in Blender, but the 3nm M5 shader core is weaker than an RDNA 3.5 one on 4nm in pure raster. That only matters for old games, though. I bet if RT was enabled here, M5 Max would be much faster.

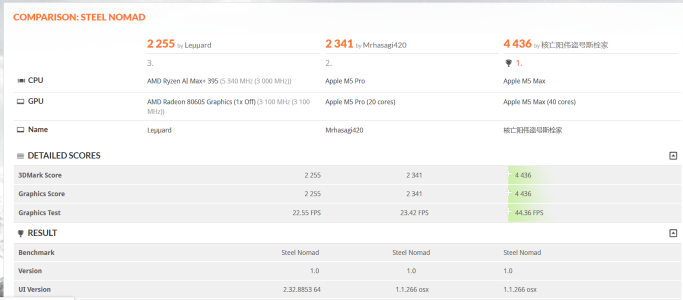

> I know it does in Blender, but the 3nm M5 shader core is weaker than an RDNA 3.5 one on 4nm in pure raster. That only matters for old games, though. I bet if RT was enabled here, M5 Max would be much faster.

The 8060S performs on par with the M5 Pro in Steel Nomad. I think the issue is not with the M5’s shader cores (they’re not weak) but with game optimisation. The reason Blender works so well is not only down to Apple’s superior RT implementation, but also to excellent optimisation and a well-implemented Metal backend. Apple has assigned a dedicated team of engineers to work on optimising Blender for Mac. If Apple had formed a separate engineering team to optimise game ports for Mac, the results would have been better.

All AAA games released this year use RT and the PS6 AAA games will use even more demanding RT.

> The 8060S performs on par with the M5 Pro in Steel Nomad. I think the issue is not with the M5’s shader cores (they’re not weak) but with game optimisation. The reason Blender works so well is not only down to Apple’s superior RT implementation, but also to excellent optimisation and a well-implemented Metal backend. Apple has assigned a dedicated team of engineers to work on optimising Blender for Mac. If Apple had formed a separate engineering team to optimise game ports for Mac, the results would have been better.

If only Apple had acquired a game studio back then lol

Result

www.3dmark.com

That is an impressive and detailed analysis. I am puzzled, given how much he assesses LLM performance implications, that he doesn’t even mention the neural accelerators, let alone test them.

Apple M5 GPU Roofline Analysis

In this deep dive, we will examine M5's GPU performance across various workloads. TLDR: It can be very powerful if the programmer knows how to use it correctly.

www.michaelstinkerings.org

Idk how accurate this guy is, but it suggests that games need a good port or they will only use half of the GPU's power
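For context on the "roofline" in that article's title: the basic roofline model caps attainable throughput at the lower of peak compute and memory bandwidth times arithmetic intensity, which is one way a workload can end up using only a fraction of the GPU. A minimal Python sketch of that formula follows. The hardware numbers are placeholders chosen for illustration, not measured M5 figures and not taken from the linked analysis.

```python
def attainable_gflops(peak_gflops: float, bandwidth_gbs: float, flops_per_byte: float) -> float:
    """Roofline bound: limited by compute or by memory traffic, whichever is lower."""
    return min(peak_gflops, bandwidth_gbs * flops_per_byte)


# Placeholder hardware numbers, NOT real M5 or Strix Halo specs.
PEAK = 4000.0  # peak compute in GFLOP/s
BW = 150.0     # memory bandwidth in GB/s

for intensity in (0.5, 8.0, 64.0):
    bound = attainable_gflops(PEAK, BW, intensity)
    kind = "memory-bound" if BW * intensity < PEAK else "compute-bound"
    print(f"{intensity:5.1f} FLOP/byte -> {bound:7.1f} GFLOP/s ({kind})")
```

With these made-up numbers, a kernel needs more than about 27 FLOP per byte moved before it stops being limited by memory bandwidth.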

> That is an impressive and detailed analysis. I am puzzled, given how much he assesses LLM performance implications, that he doesn’t even mention the neural accelerators, let alone test them.

If you mean the ANE, it's not part of the GPU die, that's why.